OpenClaw Byterover v2026.4X just launched with three specific features that collectively transform what AI agents can do.

Individual features in AI updates usually don't move the needle much.

A better prompt template here, a bug fix there.

OpenClaw Byterover is different.

Three features that individually would be significant.

Together, they reshape what persistent AI memory looks like.

And they all work with 92% retrieval accuracy — real numbers, not marketing.

Let me walk through each feature in detail and show you why they matter.

OpenClaw Byterover Feature One: The Context Engine

The context engine is the part of OpenClaw Byterover that makes your agent feel smart.

What It Does

Before your agent executes any task, the context engine scans your knowledge tree for relevant memories.

It pulls the right information.

Then it injects that context into the prompt before the agent acts.
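To make the scan, pull, inject flow concrete, here is a minimal sketch in Python. It is not Byterover's actual implementation: the `Memory` class, the word-overlap scoring, and the function names are all assumptions made for illustration, and a real engine would likely use something smarter than shared words.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    topic: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return set(cleaned.split())


def retrieve(task: str, memories: list[Memory], top_k: int = 3) -> list[Memory]:
    """Rank memories by how many words they share with the task (hypothetical scoring)."""
    task_words = tokenize(task)
    scored = [(len(task_words & tokenize(m.topic + " " + m.text)), m) for m in memories]
    relevant = [(score, m) for score, m in scored if score > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in relevant[:top_k]]


def build_prompt(task: str, memories: list[Memory]) -> str:
    """Prepend the retrieved memories to the task before the agent acts."""
    context = "\n".join(f"- {m.text}" for m in retrieve(task, memories))
    return f"Relevant context:\n{context}\n\nTask: {task}"


# A toy knowledge tree; the entries are invented for the example.
knowledge_tree = [
    Memory("Jane preferences", "Jane prefers detailed scope sections in a proposal"),
    Memory("pricing", "Standard pricing for the marketing service tier"),
    Memory("deployment", "Staging server restarts every Sunday"),
]
prompt = build_prompt("Draft a client proposal for Jane's marketing business", knowledge_tree)
print(prompt)
```

The unrelated deployment note shares no words with the task, so it never reaches the prompt. That filtering step is the whole point of the engine.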

Why This Matters

Traditional AI agents are reactive.

They respond to whatever's in the current prompt.

If you forget to include context, they don't have it.

The context engine is proactive.

It anticipates what the agent needs and loads it automatically.

Practical Example

You ask: "Draft a client proposal for Jane's marketing business."

Without context engine:

- Generic proposal template

- Uses default tone

- Uses default pricing

- Doesn't reference previous interactions with Jane

With context engine:

- Loads Jane's specific preferences (she prefers detailed scope sections)

- Loads previous proposals written for her industry

- Loads your standard pricing for her service tier

- Loads any relevant conversation history

- Drafts proposal tailored to Jane specifically

The difference is night and day.

The Learning Loop

After your agent completes the task, the context engine saves new insights.

If Jane responded positively, that pattern is saved.

If she rejected certain phrasings, those are marked.

Every task teaches the context engine what works for each specific context.
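One way to picture the learning loop is a per-context scoreboard. The sketch below is an assumption about how such feedback could be tracked, not how Byterover stores it; `FeedbackLog` and its methods are hypothetical names.

```python
from collections import defaultdict


class FeedbackLog:
    """Tracks which patterns worked or failed for each context (hypothetical structure)."""

    def __init__(self):
        # scores[context][pattern] goes up on acceptance and down on rejection
        self.scores = defaultdict(lambda: defaultdict(int))

    def record(self, context: str, pattern: str, accepted: bool) -> None:
        self.scores[context][pattern] += 1 if accepted else -1

    def preferred(self, context: str) -> list[str]:
        return [p for p, s in self.scores[context].items() if s > 0]

    def avoided(self, context: str) -> list[str]:
        return [p for p, s in self.scores[context].items() if s < 0]


log = FeedbackLog()
log.record("Jane", "detailed scope section", accepted=True)
log.record("Jane", "aggressive discount language", accepted=False)
print(log.preferred("Jane"))
print(log.avoided("Jane"))
```

Patterns that were accepted end up in the preferred list for that client, and rejected phrasings end up in the avoided list, which mirrors the "saved" and "marked" behaviour described above.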

OpenClaw Byterover Feature Two: Automatic Memory Flush

This is the unsung hero of OpenClaw Byterover.

The Problem It Solves

AI agents have context windows — a maximum amount of "short-term memory" they can hold.

When the window fills up, older content gets pushed out.

Historically, important information has been lost along with the routine content.

What the Automatic Memory Flush Does

When the context window approaches full, Byterover steps in.

It reviews everything in the window and categorises:

- Important architectural decisions → Save to knowledge tree

- Successful patterns → Save to knowledge tree

- Bug fixes and resolutions → Save to knowledge tree

- Your preferences revealed in conversation → Save to knowledge tree

- Routine back-and-forth → Discard safely

Nothing important gets lost.

Only the noise gets flushed.
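The categorise-then-flush logic can be sketched in a few lines of Python. Everything here is an assumption for illustration: the category labels, the 80% threshold, and the idea that an upstream classifier has already tagged each message. Byterover's real rules are not documented in this post.

```python
from dataclasses import dataclass

# Categories the list above says are worth keeping; the label names are assumptions.
KEEP = {"architecture_decision", "successful_pattern", "bug_fix", "preference"}


@dataclass
class Message:
    category: str  # assumed to be assigned by an upstream classifier
    text: str
    tokens: int


def flush(window, knowledge_tree, max_tokens, threshold=0.8, keep_recent=1):
    """When the window is nearly full, save important messages and drop the rest."""
    used = sum(m.tokens for m in window)
    if used < threshold * max_tokens:
        return window  # still room, nothing to do
    for message in window:
        if message.category in KEEP:
            knowledge_tree.append(message.text)
    # Keep only the most recent messages in the live window.
    return window[-keep_recent:] if keep_recent else []


window = [
    Message("architecture_decision", "Use Postgres for the orders service", 400),
    Message("routine", "Thanks, looks good", 100),
    Message("bug_fix", "Timeout fixed by raising the pool size", 400),
]
tree = []
window = flush(window, tree, max_tokens=1000)
print(tree)
```

The routine "Thanks, looks good" message is dropped, while the decision and the bug fix land in the knowledge tree before the window is trimmed.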

Why This Enables Long-Term Relationships

Without automatic memory flush, AI agents can't maintain long-term context.

Week-long projects? Forget it.

Month-long client relationships? Impossible.

Year-long business operations? Not happening.

With automatic memory flush, none of those are problems anymore.

You can work with your agent on projects lasting months or years, and the important context persists.

Real-World Impact

One of my community members set up OpenClaw Byterover for their customer support.

Week 1: Agent learned basic policies.

Week 4: Agent had internalised edge cases from handled tickets.

Week 12: Agent handled 80% of support autonomously without losing context.

Month 6: Agent was training new team members on the established SOPs.

This compound effect only works when memory flush protects important learning.

🔥 Want to set up OpenClaw Byterover's memory flush for maximum business value?

Inside the AI Profit Boardroom, I share my exact memory flush configurations — what to prioritise saving, how to structure the knowledge tree, and the categorisation rules that deliver the best accuracy. Plus the 6-hour OpenClaw course has been updated for v2026.4X. 3,000+ members running sophisticated memory setups.

OpenClaw Byterover Feature Three: Daily Knowledge Mining

This is where OpenClaw Byterover feels genuinely magical.

What Daily Knowledge Mining Does

Every morning at 9am, a cron job runs the BRV curate command.

This command:

- Scans your recent notes and memory entries

- Identifies repeated patterns across them

- Extracts high-value insights

- Categorises them into the knowledge tree

- Consolidates redundant information

- Rebuilds retrieval indexes

Your agent genuinely gets smarter every day without you lifting a finger.
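Here is a rough Python sketch of what a curation pass like this could look like. It does not reproduce what the BRV curate command actually does internally; the `curate` function, the exact-match duplicate detection, and the word-level index are simplifying assumptions.

```python
from collections import Counter, defaultdict


def normalise(note: str) -> str:
    """Lowercase and collapse whitespace so near-identical notes compare equal."""
    return " ".join(note.lower().split())


def curate(notes, min_repeats=2):
    """Consolidate duplicates, flag repeated notes, and rebuild a word index."""
    counts = Counter(normalise(n) for n in notes)
    consolidated = list(counts)  # unique notes, in first-seen order
    patterns = [note for note, count in counts.items() if count >= min_repeats]
    index = defaultdict(set)
    for position, note in enumerate(consolidated):
        for word in note.split():
            index[word].add(position)  # word -> positions of notes containing it
    return consolidated, patterns, dict(index)


notes = [
    "Client prefers email",
    "client prefers   email",
    "Invoice sent Friday",
]
consolidated, patterns, index = curate(notes)
print(consolidated)
print(patterns)
```

The two differently formatted copies of the email note collapse into one entry and get flagged as a repeated pattern, and the index is rebuilt against the cleaned-up list.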

Why Scheduled Curation Matters

You could manually organise your agent's memory.

But you won't.

Nobody does.

Automated daily curation ensures memory quality stays high without requiring human effort.

Think of it as your agent having a personal assistant who tidies up its filing system every morning.

The Compound Effect of Daily Mining

- Day 1: Knowledge tree has raw notes from one day

- Day 7: Patterns starting to emerge, duplicates consolidated

- Day 30: Clear structure, obvious high-value insights, noise removed

- Day 90: Genuinely useful business knowledge base

- Day 365: Irreplaceable business asset with deep institutional knowledge

Without daily mining, the knowledge tree would become messy logs.

With daily mining, it becomes a structured, queryable knowledge system.

Customising the Mining Schedule

Default is 9am daily.

You can configure:

- Different times if 9am doesn't fit your workflow

- Multiple daily runs if you need more frequent curation

- Weekly instead of daily runs for lighter usage

- Custom BRV curate parameters for specific needs

For most users, the default 9am daily is ideal.
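If you do change the schedule, it helps to see the options written down. The cron expressions below use the standard five-field cron syntax (minute, hour, day of month, month, day of week). Where Byterover reads its schedule from is not covered here, so the dictionary keys and the `next_daily_run` helper are illustrative assumptions only.

```python
from datetime import datetime, timedelta

# Standard five-field cron expressions; the key names are made up for this example.
SCHEDULES = {
    "default_daily_9am": "0 9 * * *",
    "twice_daily_9am_5pm": "0 9,17 * * *",
    "weekly_monday_9am": "0 9 * * 1",
}


def next_daily_run(now: datetime, hour: int = 9) -> datetime:
    """Return the next time a once-a-day job at the given hour would fire."""
    run = now.replace(hour=hour, minute=0, second=0, microsecond=0)
    if run <= now:
        run += timedelta(days=1)  # today's slot has passed, so use tomorrow's
    return run


print(next_daily_run(datetime(2026, 4, 1, 10, 30)))
```

A job checked at 10:30am has already missed today's 9am slot, so the helper returns 9am on the following day.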

The 92% Accuracy That Ties It All Together

Three features that work together produce results none could deliver alone.

- Context engine ensures the right memories get loaded

- Automatic memory flush ensures important memories don't get lost

- Daily knowledge mining ensures memories stay organised and retrievable

Together, they produce 92% to 92.2% retrieval accuracy on long-term memory tasks.

That is roughly twice the retrieval accuracy of standard vector-based AI memory systems.

And roughly three times that of basic file-based AI memory.
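A quick bit of arithmetic shows what those multiples imply. This assumes the comparison is a simple ratio of retrieval accuracy percentages, which is my reading of the claim rather than a published benchmark method.

```python
byterover_accuracy = 92.0  # percent, as quoted above

# Implied baselines if the multiples are plain ratios of accuracy.
implied_vector_accuracy = byterover_accuracy / 2
implied_file_accuracy = byterover_accuracy / 3

print(f"Implied vector-based accuracy: {implied_vector_accuracy:.1f}%")
print(f"Implied file-based accuracy: {implied_file_accuracy:.1f}%")
```

Under that reading, the implied vector-based figure is 46%, which sits inside the 40-60% range quoted for vector databases later in this post, and the implied file-based figure is a little under 31%.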

For business use cases, this accuracy difference is the difference between "useful tool" and "genuine asset."

How the Features Compare to Pre-Byterover OpenClaw

If you've been using OpenClaw before Byterover, here's what's changed:

Memory Handling

Before Byterover:

- Basic file-based memory

- Manual context injection required

- Context window limits caused loss

- No active organisation

With Byterover:

- Sophisticated knowledge tree

- Automatic context injection

- Memory flush prevents loss

- Daily automated curation

Agent Capabilities

Before Byterover:

- Short-term memory only (current session)

- Forgets across sessions

- Each session essentially fresh

With Byterover:

- Long-term memory (weeks, months, years)

- Cross-session continuity

- Cumulative learning

Business Usability

Before Byterover:

- Required constant re-prompting

- Hard to scale beyond simple tasks

- Couldn't maintain long-running projects

With Byterover:

- Minimal re-prompting needed

- Scales to complex workflows

- Maintains month/year-long context

For context on the other major OpenClaw updates, my OpenClaw Opus 4.7 breakdown covers what came before Byterover.

First-Class Plugin Status Matters

One technical detail worth highlighting.

OpenClaw made Byterover a first-class plugin in v2026.4X.

This means:

- Official integration (not a community hack)

- Guaranteed compatibility with future OpenClaw updates

- Priority support for bugs and issues

- Feature parity with core OpenClaw functionality

- Stable API that won't break randomly

Investment in Byterover won't be wasted by a sudden compatibility break.

This is the difference between hobby-grade plugins and production-ready tools.

When to Use Each Feature

Not every use case needs all three features equally.

Context Engine Most Important For:

- Multi-client work where each client has different preferences

- Content work with specific brand voice

- Client projects spanning weeks

- Technical work with architectural consistency needs

Memory Flush Most Important For:

- Long sessions with complex ongoing tasks

- Work generating lots of context (debugging, research)

- Multi-step projects where details accumulate

- Any use where sessions routinely hit context limits

Daily Knowledge Mining Most Important For:

- Business operations with repeated patterns

- Content work where learnings compound

- Any scenario where you want the agent to "get better" over time

- Long-term projects measured in months

In practice, all three features benefit most use cases.

Don't turn any off unless you have a very specific reason.

🔥 Setting up OpenClaw Byterover properly for your specific use case?

Inside the AI Profit Boardroom, I help members configure each Byterover feature optimally for their specific business. Content ops, customer support, lead gen, technical work — each has different memory priorities. Weekly coaching calls to review your setup. 3,000+ members building tailored AI memory systems.

Comparing Byterover to Other Memory Solutions

A few alternatives exist for AI agent memory:

MemGPT / Similar Systems

- Good memory management

- Not as deeply integrated as Byterover with OpenClaw

- Requires more manual setup

- No daily mining equivalent

Vector Databases (Pinecone, Weaviate, etc.)

- Flexible but need custom integration work

- Retrieval accuracy typically 40-60%

- No automated curation

- Developer-heavy to set up properly

Basic File-Based Memory

- Simple but unreliable retrieval

- No structure without manual effort

- Falls apart at scale

- Used in many DIY AI agent setups

Byterover

- Purpose-built for OpenClaw

- 92% retrieval accuracy

- Automated curation

- First-class plugin support

- Works out of the box

For OpenClaw users specifically, Byterover is the clear winner.

For non-OpenClaw users, you'll need to evaluate alternatives based on your stack.

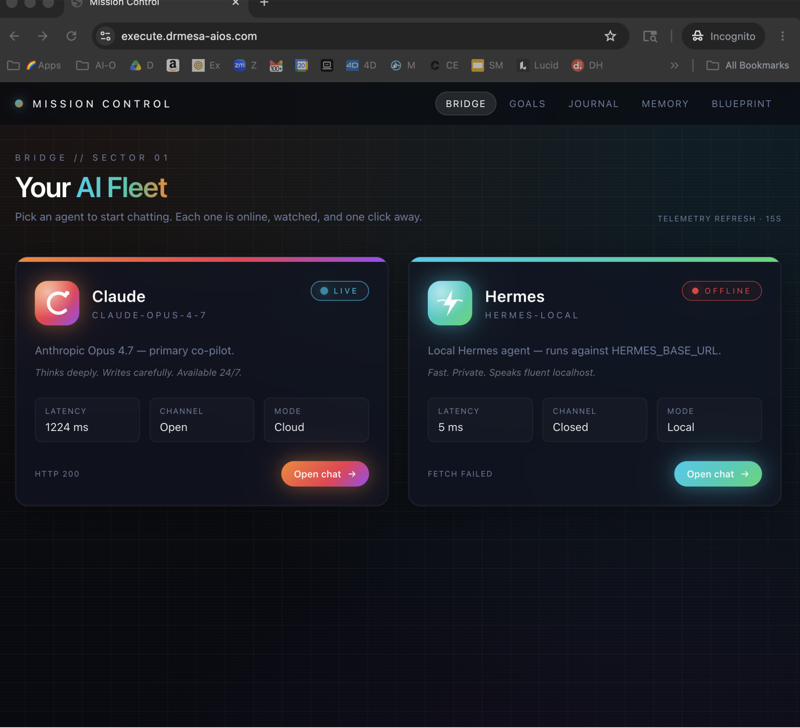

My Hermes VS OpenClaw comparison covers the broader ecosystem choice — Byterover is a strong argument in OpenClaw's favour.

OpenClaw Byterover: Frequently Asked Questions

Can I use OpenClaw Byterover without the context engine?

Yes, you can disable individual features. But the features work best together. Disabling the context engine significantly reduces the value of the memory system.

How much data can Byterover handle in the knowledge tree?

Practically unlimited for typical business use. The daily mining process keeps things organised regardless of scale. Some users have tested with millions of entries without issues.

Is the 9am daily mining schedule customisable?

Yes, fully customisable. Change to whatever time or frequency fits your workflow. Daily at 9am is just the default.

What happens if daily mining fails one day?

The next day's run will catch up. Missed curation doesn't lose data — it just means organisation is slightly behind. The system is resilient to occasional failures.
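The catch-up behaviour can be pictured as "process everything newer than the last successful run". This is a sketch of that idea under my own assumptions, not Byterover's documented mechanism; `pending_entries` and the dated tuples are hypothetical.

```python
from datetime import date


def pending_entries(entries, last_success):
    """Return the text of every entry dated after the last successful run."""
    return [text for day, text in entries if day > last_success]


entries = [
    (date(2026, 4, 1), "Refund policy clarified"),
    (date(2026, 4, 2), "Client asked for weekly reports"),
    (date(2026, 4, 3), "New onboarding checklist"),
]
# The run on 2 April failed, so the last success was 1 April.
backlog = pending_entries(entries, last_success=date(2026, 4, 1))
print(backlog)
```

Because the selection is based on the last success rather than on "yesterday", the entries from the missed day are picked up together with the newest ones.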

Can I run Byterover on a remote server?

Yes. Byterover supports both local and remote deployments. For teams or always-on setups, a remote server is often ideal.

Will Byterover be ported to other AI agents like Hermes?

Currently Byterover is specifically integrated with OpenClaw as a first-class plugin. Whether it will port to other agents is up to the Byterover team — but the deep OpenClaw integration is a key part of its current value.

Related Reading

Deepen your OpenClaw setup with these:

- OpenClaw Opus 4.7: The AI agent upgrade — pre-Byterover major update

- Ollama + Hermes: Free AI agent setup — alternative AI agent ecosystem

- Claude Code AI SEO: End-to-end automation — Claude Code as another option

- Claude Opus 4.7 AI SEO breakdown — model-level capabilities

OpenClaw Byterover's three features work together to deliver persistent AI memory that genuinely compounds value over time — and if you want the most sophisticated AI agent memory available in 2026, the answer is OpenClaw Byterover.