Claude Code Local is the most interesting open-source AI coding project I've tested in months — and this is the complete guide to getting maximum value from it.

Claude Code Local runs Claude Code with free local models instead of Anthropic's API.

You get:

- Zero subscription costs

- Complete privacy

- Offline capability

- Unlimited usage

This guide covers installation, model selection, performance optimisation, and practical use.

Video notes + links to the tools 👉

Section 1: Understanding Claude Code Local

The Core Concept

Claude Code Local is an open-source wrapper that swaps Anthropic's API for local Ollama inference.

Same Claude Code experience.

Different underlying model source.

Why This Matters

Not everyone can or wants to pay for cloud AI.

Privacy concerns exist.

Rate limits frustrate high-volume users.

Offline work sometimes required.

Local inference solves all of these.

The Project's Origin

Built by the open-source community.

Active development.

Freely available on GitHub.

Section 2: Installation Walkthrough

Prerequisites

- macOS, Linux, or Windows

- 16GB RAM minimum (32GB comfortable)

- 50GB+ free disk space

- Decent CPU (Apple Silicon ideal)

Step 1: Install Ollama

Visit ollama.com and follow platform-specific instructions.

On macOS:

curl -fsSL https://ollama.com/install.sh | sh

Step 2: Download Models

Start with one:

ollama pull qwen3.5

Add more later:

ollama pull gemma4

ollama pull llama3.3

Step 3: Install Claude Code Local

From the GitHub project page, copy the quickstart commands.

Paste into your current Claude Code session.

Claude handles the setup.

Step 4: Test

Run a basic test:

claude-code-local "Write a simple hello world in Python"

If it responds, you're good.

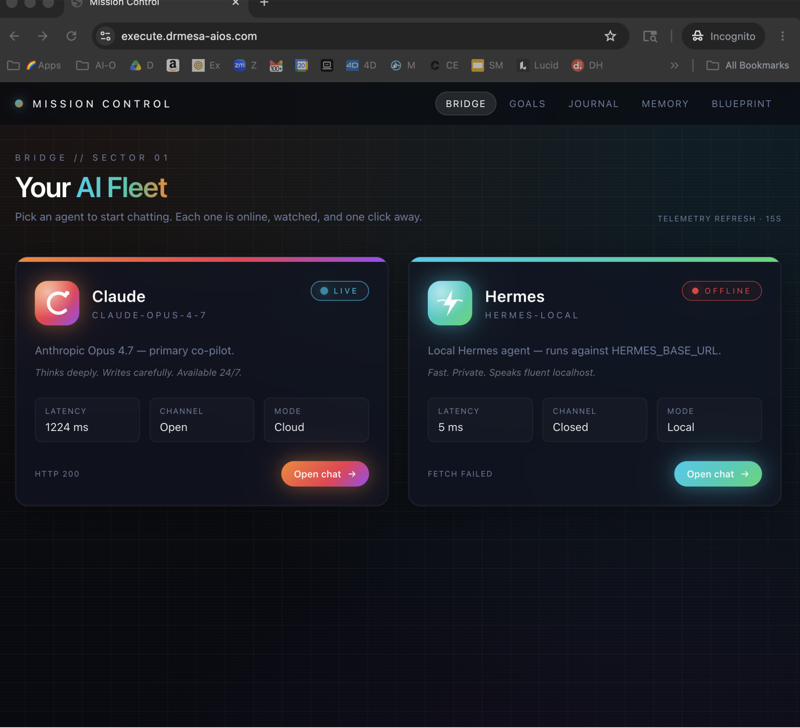

My Ollama + Hermes setup covers similar foundational concepts.

Section 3: Model Selection Matrix

Choose Qwen 3.5 If:

- You have 32GB+ RAM

- Output quality matters most

- You're doing complex coding work

- You can accept slower response times

Choose Gemma 4 If:

- Hardware is constrained

- Speed matters most

- Tasks are relatively simple

- You prioritise responsiveness

Choose Llama 3.3 If:

- You want stability/reliability

- Middle-ground performance is fine

- You're comfortable with well-tested models

- You want good community support

Switching Between Models

claude-code-local --model qwen3.5 "complex task"

claude-code-local --model gemma4 "quick task"

claude-code-local --model llama3.3 "general task"

Switch anytime based on need.

🔥 Want my complete Claude Code Local configuration?

Inside the AI Profit Boardroom, I share optimised configurations for different hardware tiers. M1/M2/M3/M4 Macs, various GPU setups, modest hardware. Performance tips, model selection frameworks, cost optimisation. 3,000+ members running optimised setups.

Section 4: Claude Code Local Performance Optimisation

Hardware Tips

- Apple Silicon Mac: Best out-of-the-box experience

- NVIDIA GPU: Great if you have one

- AMD GPU: Workable but less optimised

- CPU-only: Possible but slow

Memory Management

- Close unnecessary applications

- Keep at least 8GB free for other processes

- Monitor with Activity Monitor/Task Manager

Speed Tuning

- Use smaller quantised models (Q4) if speed matters

- Larger quantised models (Q8) for quality

- Consider context window — larger = slower

Common Bottlenecks

- Disk I/O: SSD essential

- Memory swap: Disable if possible

- CPU contention: Run Claude Code Local dedicated

Section 5: Use Case Deep Dives

Use Case A: Private Client Projects

Setup: Claude Code Local with Qwen 3.5.

Workflow:

- Client code stays local

- No API calls to external services

- Full Claude Code functionality

- NDA-respectful workflow

Use Case B: High-Volume Generation

Setup: Gemma 4 for speed.

Workflow:

- Generate many code comments

- Quick documentation

- Batch processing

- Unlimited iterations

Use Case C: Offline Development

Setup: Multiple models cached locally.

Workflow:

- Work on flights/trains

- Remote locations with poor internet

- Full development capability preserved

Use Case D: Learning/Teaching

Setup: Claude Code Local deployed for team.

Workflow:

- Students learn without API concerns

- Unlimited practice time

- No quota exhaustion mid-lesson

Section 6: Integration With Development Workflows

VS Code Integration

- Install Ollama extension

- Configure Claude Code Local as external tool

- Bind to keyboard shortcuts

Command-Line Workflows

- Alias for frequent commands

- Shell functions for common patterns

- Git hook integration

IDE Integrations

- JetBrains IDEs via external tools

- Neovim plugins

- Emacs configurations

Learn how I make these videos 👉

Section 7: Advanced Techniques

Running Multiple Models Simultaneously

Ollama handles this natively.

Different Claude Code Local instances can use different models concurrently.

Custom Model Fine-Tuning

Advanced users can fine-tune local models on their specific codebases.

Dramatically improves quality for your particular patterns.

Embedding Models for Context

Run embedding models alongside chat models.

Better retrieval of relevant code context.

Prompt Caching

Ollama caches frequently-used prompts.

Improves response time for similar queries.

Section 8: Limitations and Workarounds

Limitation 1: Complex Reasoning

Local models can struggle with complex multi-step reasoning.

Workaround: Keep cloud Claude subscription as occasional fallback.

Limitation 2: Long Context

Some local models have shorter context windows.

Workaround: Use Qwen 3.5 (128K context) for long-context needs.

Limitation 3: Very Recent Information

Local models have training cutoffs.

Workaround: Combine with search APIs for current information.

Limitation 4: Specialised Knowledge

Highly specialised domains may have gaps.

Workaround: Fine-tune on your domain or use cloud for edge cases.

Section 9: Speech Feature

One unique Claude Code Local capability: speech integration.

What It Does

Text-to-speech integration lets you hear responses.

Use Cases

- Accessibility

- Audio code review

- Hands-free work

- Learning via listening

Setup

Speech features require additional configuration.

Check project documentation for platform specifics.

🔥 Master Claude Code Local advanced features

Inside the AI Profit Boardroom, I cover power-user features of Claude Code Local — speech integration, fine-tuning, multi-model workflows, embedding pipelines. Take your local AI coding to expert level.

Section 10: When to Use Local vs Cloud

Choose Local When

- Privacy is paramount

- High-volume usage

- Offline work needed

- Cost-conscious

- Experimenting/learning

Choose Cloud When

- Best possible quality needed

- Complex reasoning required

- Time-sensitive work

- Low-volume premium tasks

Hybrid Approach

Best setup: both available, switched by context.

Most experienced users have both.

Claude Code Local: Frequently Asked Questions

Is this legal?

Yes, completely. Ollama and the local models are open-source. Claude Code Local is open-source.

Does it violate Anthropic's terms?

No — it's not using Anthropic's API. It's a separate project using different models.

Can I use multiple models together?

Yes, switch between them as needed.

How's the quality compared to cloud Claude?

Qwen 3.5 is ~85-90% of Claude Opus for most coding tasks. Gemma 4 is ~65-75%.

Is Claude Code Local production-ready?

For most use cases, yes. Test your specific workflows first.

How do updates work?

Pull new model versions via Ollama. Update Claude Code Local via GitHub.

Related Reading

- Ollama + Hermes: Local AI foundation

- Claude Code AI SEO: Cloud-based workflows

- Hermes VS OpenClaw: Agent ecosystem comparison

- Claude Opus 4.7 AI SEO: Model capability comparison

This complete guide to Claude Code Local should equip you to deploy it successfully — and for privacy, cost, and freedom, Claude Code Local is increasingly the right choice in 2026.