Claude Code free via Ollama is one of the most valuable developer discoveries of 2026 — and this is the complete guide to using it properly.

Everything you need:

- Installation walkthrough

- Model selection framework

- Performance optimisation

- Business deployment patterns

- Limit management

- Quality comparisons

Let me cover it all.

Chapter 1: Understanding The Claude Code Free Setup

What Makes Claude Code Free

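Claude Code can be pointed at model providers other than Anthropic's own API.

Ollama is one such provider: it can serve models to Claude Code in place of Anthropic's.

Ollama offers free access to various cloud and local models.

Result: Claude Code (the tool) running on free Ollama-served models instead of paid Claude models → free Claude Code.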

Why This Works

Not a hack.

Officially supported path.

Completely legitimate.

Who Built Each Part

- Claude Code: Anthropic

- Ollama: Ollama team (separate company)

- Cloud models (GLM 5.1, etc.): Various providers partnering with Ollama

- Local models (Gemma 4, etc.): Various open-source providers

The Ecosystem

Healthy open ecosystem of AI tools.

Users benefit from competition and openness.

Chapter 2: Installation

Installing Ollama

# macOS

curl -fsSL https://ollama.com/install.sh | sh

# Linux

curl -fsSL https://ollama.com/install.sh | sh

# Windows

# Download installer from ollama.com

Verifying Installation

ollama --version

Should show version number.
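
A small pre-flight check can confirm that both tools are reachable before any models are pulled. The sketch below is illustrative only: the helper names `have_cmd` and `check_tool` are made up for this example, and it relies on nothing beyond the standard `command -v` shell builtin. It assumes the Claude Code binary is called `claude`.

```shell
# Hypothetical helper: succeed if the named command is on PATH.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# Print one status line for a tool; return 1 if it is missing.
check_tool() {
  if have_cmd "$1"; then
    echo "$1: found"
  else
    echo "$1: missing"
    return 1
  fi
}
```

Typical use would be `check_tool ollama && check_tool claude`, which stops at the first missing tool.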

Pulling Models

# Cloud models (fast, free with limits; tag names change, so confirm them in Ollama's model library)

ollama pull glm5.1-cloud

ollama pull qwen3.5-cloud

ollama pull kimi-k2.5-cloud

# Local models (slower, unlimited free)

ollama pull gemma4

ollama pull qwen3.5

ollama pull llama3.3

Launching Claude Code With Ollama Model

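ollama launch claude --model glm5.1-cloud

On recent Ollama releases that include the launch command, this starts Claude Code with the specified model. Claude Code itself must already be installed. By contrast, ollama run glm5.1-cloud only opens Ollama's own chat prompt.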
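
Claude Code can also be pointed at Ollama by hand through environment variables. The sketch below prints the exports rather than setting them, so they can be inspected first. The variable names and the default address `http://localhost:11434` follow the usual pattern for Ollama's Anthropic-compatible endpoint, but treat them as assumptions to confirm against the current Ollama and Claude Code documentation. The function name `claude_ollama_env` is hypothetical.

```shell
# Print the environment Claude Code would need to talk to an Ollama server.
# Assumption: Ollama serves an Anthropic-compatible API at the given address.
# $1 = server URL (optional, defaults to a local Ollama instance)
claude_ollama_env() {
  host="${1:-http://localhost:11434}"
  echo "export ANTHROPIC_BASE_URL=$host"
  # Assumed to be a placeholder value; a token must be set for the client to start.
  echo "export ANTHROPIC_AUTH_TOKEN=ollama"
}
```

One way to use it is `eval "$(claude_ollama_env)"` followed by `claude --model glm5.1-cloud`, with the model name taken from the pull step above.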

First Verification

Type a test prompt:

Hello, are you working?

If the model responds, you're set.
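
The same check can be made against Ollama's HTTP API without opening an interactive session. This is a minimal sketch assuming a local server on the default port 11434 and the `/api/generate` endpoint. The function names are hypothetical, and the payload builder does no JSON escaping, so it only suits simple prompts.

```shell
# Build a non-streaming request body for Ollama's /api/generate endpoint.
# No JSON escaping is done: keep the prompt free of quotes and backslashes.
# $1 = model name, $2 = prompt text
generate_payload() {
  printf '{"model":"%s","prompt":"%s","stream":false}\n' "$1" "$2"
}

# Send a test prompt to a local Ollama server and print the raw JSON reply.
smoke_test() {
  curl -s http://localhost:11434/api/generate -d "$(generate_payload "$1" "$2")"
}
```

For example, `smoke_test gemma4 "Hello, are you working?"` should return a JSON object containing the model's reply if the server and model are available.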

Chapter 3: Model Selection

For Most Users: GLM 5.1 Cloud

Best balance of:

- Speed (fast)

- Quality (very good)

- Cost (free within limits)

Recommended starting point.

For Quality-Critical Work: Qwen 3.5 Cloud

Best free quality.

Slower than GLM.

Free within limits.

For Unlimited Use: Gemma 4 Local

Always free.

Good for simple tasks.

Fast on capable hardware.

For Balanced Local: Qwen 3.5 Local

Best local quality.

Needs substantial RAM.

Moderate speed.

Switching Strategies

Start with GLM 5.1 Cloud.

Switch to local when hitting limits.

Use Qwen 3.5 for complex tasks requiring quality.
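
The switching rules above can be written down as a small routing function. This is a sketch of one possible rule set, not a feature of Ollama or Claude Code: the function name `pick_model` and its two arguments are invented here, and the model names are the ones used in this guide.

```shell
# Hypothetical routing rule mirroring the switching strategy.
# $1 = task kind: "complex" or anything else
# $2 = "yes" if the cloud limit has been reached
pick_model() {
  if [ "$2" = "yes" ]; then
    if [ "$1" = "complex" ]; then
      echo "qwen3.5"         # best local quality
    else
      echo "gemma4"          # local model for simple tasks
    fi
  elif [ "$1" = "complex" ]; then
    echo "qwen3.5-cloud"     # quality-critical work
  else
    echo "glm5.1-cloud"      # default starting point
  fi
}
```

A wrapper script could then call `pick_model complex no` and pass the result on as the model name.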

🔥 Master Claude Code Free with optimal configurations

Inside the AI Profit Boardroom, I share hardware-specific configurations — exact settings for M1/M2/M3/M4 Macs, various GPUs, different hardware tiers. Plus model selection matrices for various use cases.

Chapter 4: Managing Claude Code Free Tier Limits

Understanding Limits

Ollama's free cloud tier has token/request limits.

Specifics change — check Ollama's current documentation.

Generally generous for individual developers.

Monitoring Usage

Ollama provides usage indicators.

Pay attention to stay within limits.

Strategies to Stay Free

Strategy 1: Cloud for Quality, Local for Volume

Use cloud models for complex/quality-sensitive work.

Use local models for high-volume routine work.

Strategy 2: Rotate Cloud Models

GLM 5.1 hitting limits? Switch to Qwen 3.5 Cloud.

Distribute usage across multiple cloud models.

Strategy 3: Scheduled Tasks

Queue heavy work during off-peak hours.

Off-peak runs may get better performance and, depending on how limits are pooled, a separate allowance.

Strategy 4: Local Fallback

Always have local models ready for when cloud limits hit.
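
A fallback can be automated by trying models in order and keeping the first one that works. The sketch below assumes that a failed request, such as one rejected for exceeding a cloud limit, shows up as a non-zero exit status from whatever command you use to call the model. That behaviour is an assumption, not something the tools guarantee. The function name `first_working_model` and the idea of a "runner" command are hypothetical.

```shell
# Try each model in order until the runner command succeeds.
# $1 = runner command that takes a model name and exits 0 on success
# remaining arguments = model names, in order of preference
first_working_model() {
  runner="$1"
  shift
  for model in "$@"; do
    if "$runner" "$model" >/dev/null 2>&1; then
      echo "$model"
      return 0
    fi
  done
  return 1   # no model worked
}
```

With a runner script of your own, `first_working_model ./ask.sh glm5.1-cloud qwen3.5-cloud gemma4` would print the first model that answered, where `./ask.sh` is a placeholder name.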

When to Consider Paid Tiers

If you are consistently hitting limits:

- A small paid Ollama tier is worth considering

- It is still cheaper than going to Anthropic directly

- Or invest in better hardware for local models

Chapter 5: Performance Optimisation

Speed Tips

- Use cloud models when speed matters

- Optimise prompt length

- Cache common queries when possible

- Close unused applications

Quality Tips

- Use Qwen 3.5 for reasoning tasks

- Provide clear context

- Iterate on prompts that underperform

- Break complex tasks into steps

Hardware Optimisation

For Local Models:

- Apple Silicon Mac recommended

- 32GB+ RAM ideal

- NVMe SSD for model loading

- Avoid running on battery

For Cloud Models:

- Fast internet helps

- Reliable connection matters

- Low-latency network location

Chapter 6: Real Use Cases

Development Work

Standard coding workflows.

Code review and suggestions.

Test generation.

Documentation.

Content Operations

My Claude Code AI SEO approach works perfectly with free Claude Code.

Generate articles at scale for essentially zero cost.

Research

Analysis of documents.

Trend tracking.

Competitive intelligence.

Data Processing

Structured data extraction.

Format conversions.

Quality checking.

Customer Interactions

Support chatbots.

Email drafting.

Customer success operations.

Chapter 7: Integration With Other Tools

With Claude Desktop

Claude Code Free integrates naturally.

Workflow unchanged.

Just a different model provider.

With VS Code

Extension configuration specifies Ollama endpoint.

Standard development experience.

With JetBrains IDEs

External tool configuration for Claude Code Free.

Bind to keyboard shortcuts.

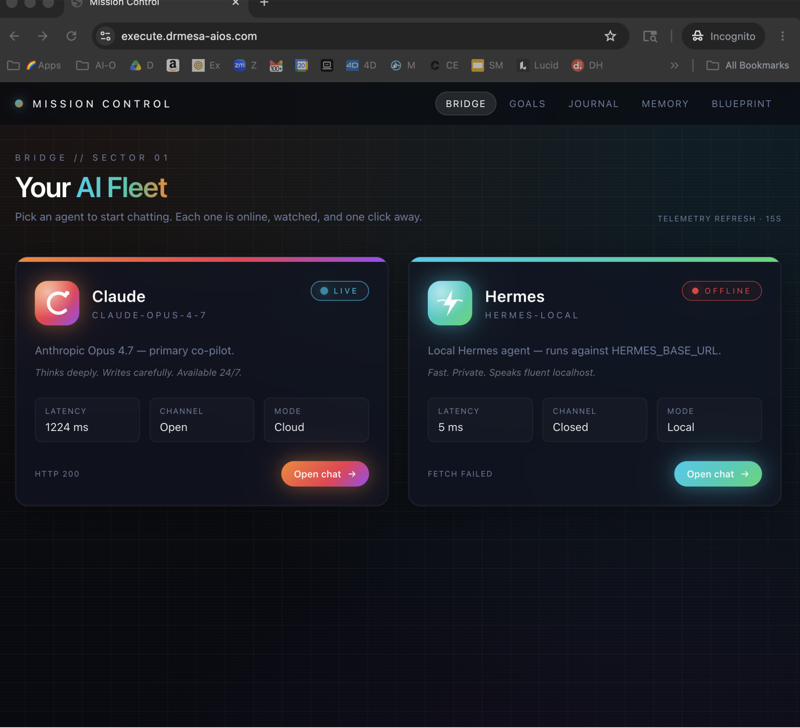

With Automation Tools

Pair with:

- Hermes Agent Workspace for agent orchestration

- OpenClaw Byterover for persistent memory

- Ollama + Hermes for broader Hermes capabilities

Chapter 8: Business Deployment

For Individual Developers

Simplest deployment.

Install locally.

Use daily.

Save subscription costs.

For Small Teams

Shared Ollama instance on team server.

Everyone connects.

Centralised management.
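
For a shared instance, each client needs the address of the team server. The sketch below only builds that address. It assumes Ollama's default port 11434 and the `OLLAMA_HOST` environment variable, which the Ollama CLI and server read. The hostname `team-server.internal` and the function name `team_ollama_url` are placeholders.

```shell
# Build the base URL for a shared Ollama server.
# $1 = hostname, $2 = port (optional, defaults to Ollama's usual 11434)
team_ollama_url() {
  echo "http://$1:${2:-11434}"
}

# On the server (assumed):   OLLAMA_HOST=0.0.0.0:11434 ollama serve
# On each client (assumed):  export OLLAMA_HOST="$(team_ollama_url team-server.internal)"
```

Binding the server to all interfaces exposes it to the whole network, so this setup fits a trusted internal network or one with a reverse proxy in front.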

For Agencies

Client-specific deployments.

Isolated instances per client.

Better for compliance.

For Enterprises

Multiple Ollama instances.

Enterprise authentication.

Audit logging.

Integration with existing tools.

Chapter 9: Privacy and Security

Cloud Models

Your code is sent to the provider.

Standard cloud security applies.

Check the provider's privacy policy.

Local Models

Complete privacy.

Nothing leaves your machine.

Best for sensitive work.

Hybrid Approach

Public code → cloud models.

Private code → local models.

Policies by project.
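
A per-project policy can be as simple as a marker file in the repository. The sketch below is one hypothetical convention, not something Claude Code or Ollama recognises: a file named `.private` in the project directory forces a local model, and anything else is allowed to use a cloud model. The function name `model_for_project` is invented for this example.

```shell
# Hypothetical per-project policy: a ".private" marker file forces a local model.
# $1 = path to the project directory
model_for_project() {
  if [ -e "$1/.private" ]; then
    echo "qwen3.5"         # local: code stays on the machine
  else
    echo "glm5.1-cloud"    # cloud: acceptable for public code
  fi
}
```

Calling `model_for_project "$PWD"` from a launcher script keeps the decision with the project instead of relying on memory.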

Compliance Considerations

Local models often preferable for:

- GDPR compliance

- HIPAA compliance

- Financial services regulations

- Custom enterprise requirements

🔥 Deploy Claude Code Free securely for any organisation

Inside the AI Profit Boardroom, I cover security hardening for Claude Code Free deployments. Authentication, network security, audit procedures, compliance frameworks. Enterprise-grade free AI setup.

Chapter 10: Comparing Quality

Benchmark-Style Comparison

Paid Claude Opus 4.7: 100% quality baseline (the figures below are rough estimates relative to it, not measured benchmark scores).

Free GLM 5.1 Cloud: 85-90%.

Free Qwen 3.5 Cloud: 85-92%.

Free Qwen 3.5 Local: 80-87%.

Free Gemma 4 Local: 65-75%.

Practical Quality

For 90% of coding tasks, all free options work well.

For complex reasoning, Qwen 3.5 shines.

For routine work, Gemma 4 is plenty.

When Paid Justifies Itself

Elite-quality work where a 10% quality difference matters commercially.

Time-sensitive deadlines where paid speed helps.

Complex multi-step workflows where paid reliability matters.

Otherwise: free saves you thousands without meaningful quality loss.

Claude Code Free: Frequently Asked Questions

Is this actually free or just cheap?

Genuinely free within Ollama's limits. Local models are always completely free.

How much can I save annually?

Typical individual: £240-2,400/year. Teams: much more.

Can I combine with paid Claude?

Yes. Hybrid approach works well.

Is this sustainable long-term?

Ollama's free tier is available today, though its terms can change. Open-source local models mean a free option remains available either way.

What happens if I exceed cloud limits?

Switch to local models, or pay a small amount for continued cloud access.

Is this quality sufficient for professional work?

For most professional work, yes. For elite-quality work, verify with your specific tasks.

Related Reading

- Claude Code Local: Local-only approach

- Ollama + Hermes: Ollama ecosystem introduction

- Claude Code AI SEO: Content automation

- Hermes Agent Workspace: Paired agent tool

This complete guide to Claude Code free should equip you to deploy it successfully — and for anyone using Claude Code regularly, Claude Code free is the cost optimisation worth implementing immediately.