This deepseek v4 tutorial is the one I wish someone sent me before I wasted an hour trying to figure out which mode does what.

Let me save you the learning curve.

DeepSeek V4 launched the exact same day as GPT 5.5.

That alone tells you the Chinese AI lab is not messing around anymore.

Now let me break it all down — what it is, how to use it, and whether it actually deserves the hype.


DeepSeek V4 Tutorial — What DeepSeek V4 Is In One Paragraph

DeepSeek V4 is an open-source, mixture-of-experts (MoE) large language model released in two sizes — V4 Pro (1.6T params, 49B active) and V4 Flash (284B params, 13B active), both with a 1 million token context window, both available free on chat.deepseek.com, and both accessible via API at platform.deepseek.com.

That's the elevator pitch.

Now the details.

DeepSeek V4 Tutorial — The Modes You Need To Understand

DeepSeek V4 has multiple modes, and picking the wrong one will waste your time.

On chat.deepseek.com

- Instant mode — fast, non-think, great for quick answers

- Expert mode — slower, more careful

- Deep Think (optional toggle inside Expert) — reasoning chain up to 384K tokens

On the API at platform.deepseek.com

- Non-think — fastest, cheapest

- Think high — step-by-step reasoning

- Think max — maximum thinking budget
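If you're calling the API from a script, a small router keeps you on the cheapest mode that still fits the task. This is a minimal Python sketch. It assumes the OpenAI-compatible `https://api.deepseek.com/chat/completions` endpoint that earlier DeepSeek models use. The model id `deepseek-v4-flash` and the `mode` field are hypothetical placeholders I made up for illustration, so check the V4 docs on platform.deepseek.com for the real names before sending anything.

```python
import json
import urllib.request

API_URL = "https://api.deepseek.com/chat/completions"  # OpenAI-compatible endpoint used by earlier DeepSeek models
MODEL_ID = "deepseek-v4-flash"  # HYPOTHETICAL model id, check the V4 docs
MODES = ("non-think", "think-high", "think-max")  # the three API modes listed above


def pick_mode(needs_reasoning: bool, hard_problem: bool) -> str:
    """Return the cheapest mode that fits the task."""
    if not needs_reasoning:
        return "non-think"
    return "think-max" if hard_problem else "think-high"


def build_payload(prompt: str, mode: str) -> dict:
    """Build an OpenAI-style chat request body."""
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "mode": mode,  # HYPOTHETICAL field name for the thinking budget
    }


def send(payload: dict, api_key: str) -> dict:
    """POST the payload and return the parsed JSON response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

For example, `send(build_payload("Summarize this log", pick_mode(False, False)), api_key)` goes out as a non-think request.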

Important Deprecation Warning

The old deepseek-chat and deepseek-reasoner endpoints retire after July 24.

If you have scripts hitting those, migrate now.

Do not wait for your automations to break at midnight.
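A quick way to find what needs migrating is to search your repos for the two old model names. Here's a small Python sketch. The file suffixes it scans are my assumption, so add whatever your automations use.

```python
import pathlib
import re

# Model names the article says retire after July 24.
DEPRECATED = ("deepseek-chat", "deepseek-reasoner")
PATTERN = re.compile("|".join(re.escape(name) for name in DEPRECATED))


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, line) for each line that mentions a deprecated name."""
    return [
        (lineno, line.strip())
        for lineno, line in enumerate(text.splitlines(), start=1)
        if PATTERN.search(line)
    ]


def find_deprecated(root: str, suffixes=(".py", ".js", ".ts", ".json", ".yaml", ".yml", ".toml")):
    """Yield (path, line_number, line) for every hit under `root`."""
    for path in pathlib.Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="ignore")
            for lineno, line in scan_text(text):
                yield path, lineno, line
```

Run `for hit in find_deprecated("path/to/repo"): print(*hit)`, then swap each hit for the V4 model names from the docs.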

DeepSeek V4 Tutorial Step-By-Step: Your First Session

No complicated setup.

Just do this.

1. Open chat.deepseek.com

Log in with email or Google.

A free account gets you full access.

2. Pick Your Mode

Switch modes with the toggle at the top of the chat box.

- Quick question → Instant

- Serious task → Expert + Deep Think

3. Type Your Prompt

Same as any chat model.

System prompts work, context works, file uploads work.

4. Watch the Thinking Chain (If Deep Think)

In Deep Think, you see the reasoning unfold.

This is useful for debugging prompts.

If the model misunderstands, the thinking chain shows you where.
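The same trick works over the API. This sketch pulls the reasoning out of a response and flags requirements the model never mentioned while thinking. It assumes the reasoning arrives in a `reasoning_content` field, as it does for the older deepseek-reasoner model. V4 may name it differently. The keyword check is deliberately crude.

```python
def split_reasoning(response: dict) -> tuple[str, str]:
    """Return (reasoning, answer) from an OpenAI-style chat completion dict."""
    message = response["choices"][0]["message"]
    # `reasoning_content` is the field deepseek-reasoner used; an assumption for V4.
    return message.get("reasoning_content") or "", message.get("content") or ""


def missing_requirements(reasoning: str, requirements: list[str]) -> list[str]:
    """List requirements that never appear (case-insensitively) in the reasoning."""
    lowered = reasoning.lower()
    return [req for req in requirements if req.lower() not in lowered]


response = {
    "choices": [{"message": {
        "reasoning_content": "User wants a Pong game in one HTML file.",
        "content": "<html>...</html>",
    }}]
}
reasoning, answer = split_reasoning(response)
print(missing_requirements(reasoning, ["Pong", "HTML", "touch controls"]))  # ['touch controls']
```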

🔥 Want my DeepSeek V4 prompt library? Inside the AI Profit Boardroom, I've got the exact prompts I use for Deep Think mode, the system prompts for API agents, and a full comparison library for DeepSeek vs Claude vs GPT on the same tasks. 3,000+ members, weekly coaching, full course library. → Join the Boardroom

The Benchmarks DeepSeek Wants You To See

Let me give them to you straight, no spin.

Factual Accuracy — DeepSeek Wins

- DeepSeek V4: 57.9 on SimpleQA Verified

- Claude Opus 4.6 Max: 46.2

- GPT 5.4 high: 45.3

If you want a model for factual lookups, V4 is the strongest of these three, with a lead of more than 11 points.
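To put those numbers in perspective, here's the arithmetic on V4's lead. It uses only the three scores above.

```python
# SimpleQA Verified scores quoted above.
SCORES = {"DeepSeek V4": 57.9, "Claude Opus 4.6 Max": 46.2, "GPT 5.4 high": 45.3}


def lead_over(scores: dict, leader: str) -> dict:
    """Return {model: (absolute point lead, relative lead in %)} for the leader."""
    top = scores[leader]
    return {
        name: (round(top - s, 1), round(100 * (top / s - 1), 1))
        for name, s in scores.items()
        if name != leader
    }


print(lead_over(SCORES, "DeepSeek V4"))
# {'Claude Opus 4.6 Max': (11.7, 25.3), 'GPT 5.4 high': (12.6, 27.8)}
```

In relative terms, that's roughly a 25% edge over both rivals.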

Coding — DeepSeek Crushes Codeforces

- 93.5% solve rate

- Ranked 23rd against human competitive programmers

That 23rd ranking is bananas.

Knowledge and Reasoning (MMLU-Pro)

- V4 Pro: 87.5

- V4 Flash: 86.2

- Kimi K2.6: close behind

Apex Shortlist

- DeepSeek V4: 90.2%

My Honest Live Test Results

I ran two tests the moment I got access.

The Pong Game Test (Deep Think)

Asked it to build a Pong game with Deep Think mode on.

Reasoning chain: long and thoughtful.

Output: playable, but the paddle movement lagged.

Generation speed: slower than I wanted.

Verdict: functional, not polished.

The Landing Page Test (Instant Mode)

Asked for an AI SaaS landing page.

Output: clean HTML, boring design.

Felt dated compared to Claude Opus 4.7 output for AI SEO pages.

Also behind GPT 5.5 Pro on visual polish.

For UI-focused coding, DeepSeek V4 is not yet where Claude is.

Why DeepSeek V4 Is Architecturally Interesting

Quick tour of what's under the hood.

Compressed Sparse Attention

4 tokens compressed into 1 for attention operations.

Dramatically cuts memory.
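Here's a toy NumPy sketch of the idea. Every 4 key/value vectors are squashed into one before attention, so each query scores 4x fewer entries. Mean-pooling is my stand-in for the compression, since DeepSeek's version is learned. The sketch also skips causal masking, so treat it as an illustration of the shapes, not the real layer.

```python
import numpy as np


def compress(x: np.ndarray, ratio: int) -> np.ndarray:
    """Mean-pool every `ratio` consecutive token vectors into one.

    x has shape (seq_len, dim); seq_len must be divisible by ratio.
    """
    seq_len, dim = x.shape
    if seq_len % ratio:
        raise ValueError("seq_len must be divisible by ratio")
    return x.reshape(seq_len // ratio, ratio, dim).mean(axis=1)


def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def compressed_attention(q, k, v, ratio=4):
    """Attention where keys and values are compressed ratio:1 first (no causal mask)."""
    k_c, v_c = compress(k, ratio), compress(v, ratio)
    scores = q @ k_c.T / np.sqrt(q.shape[-1])  # (seq_len, seq_len // ratio)
    return softmax(scores) @ v_c


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((16, 8)) for _ in range(3))
out = compressed_attention(q, k, v, ratio=4)
print(compress(k, 4).shape, out.shape)  # (4, 8) (16, 8)
```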

Heavily Compressed Attention

128 tokens compressed to 1 on deeper layers.

Makes 1M context feasible without exploding VRAM.
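The arithmetic behind that claim is simple: fewer entries per layer means less memory. This sketch counts the key/value entries one layer would hold at 1M context for each compression ratio. It assumes "1M" means exactly one million tokens.

```python
def kv_entries(context_len: int, ratio: int) -> int:
    """Key/value entries a layer keeps after ratio:1 compression (ceiling division)."""
    return -(-context_len // ratio)


CONTEXT = 1_000_000  # assumption: "1M" read as one million tokens
for ratio in (1, 4, 128):
    print(f"{ratio:>3}:1 -> {kv_entries(CONTEXT, ratio):,} entries")
```

At 128:1, a layer holds about 7.8K entries instead of a million. That's where the VRAM savings come from.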

Manifold Constrained Hyperconnections

Layers connect 4x more widely.

More cross-layer information flow.
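My reading of "manifold constrained", based on DeepSeek's mHC paper, goes like this. The matrix that mixes the parallel residual streams is kept doubly stochastic, meaning every row and column sums to 1, using Sinkhorn-Knopp normalization. That keeps the mixing from amplifying the signal as it passes through many layers. Here's a toy NumPy sketch with 4 streams. The random logits stand in for learned weights, and everything else about the real layer is omitted.

```python
import numpy as np


def sinkhorn(logits: np.ndarray, iters: int = 50) -> np.ndarray:
    """Push a square matrix toward doubly stochastic by alternating
    row and column normalization (Sinkhorn-Knopp)."""
    m = np.exp(logits - logits.max())  # strictly positive entries
    for _ in range(iters):
        m = m / m.sum(axis=1, keepdims=True)  # rows sum to 1
        m = m / m.sum(axis=0, keepdims=True)  # columns sum to 1
    return m


def mix_streams(streams: np.ndarray, logits: np.ndarray) -> np.ndarray:
    """Re-mix (n_streams, dim) residual streams with a doubly stochastic matrix."""
    return sinkhorn(logits) @ streams


rng = np.random.default_rng(0)
n_streams, dim = 4, 8  # 4 streams, assumed to correspond to the "4x" above
streams = rng.standard_normal((n_streams, dim))
logits = rng.standard_normal((n_streams, n_streams))
mixed = mix_streams(streams, logits)
# Column sums of 1 mean the total across streams is preserved.
print(np.allclose(mixed.sum(axis=0), streams.sum(axis=0)))  # True
```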

Muon Optimizer

They ditched AdamW.

Muon converges faster and reaches a lower final loss.
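The core trick in Muon is to orthogonalize each weight matrix's momentum update with a few Newton-Schulz iterations, pushing its singular values toward 1. Adam-style optimizers instead scale each element separately. The article doesn't say how DeepSeek configured Muon. This NumPy sketch follows the publicly released Muon reference code, simplified: it drops Nesterov momentum and shape-dependent learning-rate scaling. The `lr` and `momentum` defaults are illustrative.

```python
import numpy as np


def newton_schulz(g: np.ndarray, steps: int = 5, eps: float = 1e-7) -> np.ndarray:
    """Approximately orthogonalize g with the quintic Newton-Schulz iteration
    (coefficients from the public Muon reference code)."""
    a, b, c = 3.4445, -4.7750, 2.0315
    x = g / (np.linalg.norm(g) + eps)  # Frobenius norm, so all singular values <= 1
    transposed = x.shape[0] > x.shape[1]
    if transposed:
        x = x.T  # iterate on the wide orientation so x @ x.T stays small
    for _ in range(steps):
        A = x @ x.T
        B = b * A + c * A @ A
        x = a * x + B @ x  # maps each singular value s to a*s + b*s**3 + c*s**5
    return x.T if transposed else x


def muon_step(param, grad, buf, lr=0.02, momentum=0.95):
    """One simplified Muon update for a 2-D weight matrix: momentum, then orthogonalize."""
    buf = momentum * buf + grad
    update = newton_schulz(buf)
    return param - lr * update, buf


rng = np.random.default_rng(0)
update = newton_schulz(rng.standard_normal((4, 8)))
print(np.round(np.linalg.svd(update, compute_uv=False), 2))  # all roughly near 1
```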

32T Token Training

With progressive context extension:

- Start at 4K

- Extend to 16K

- Then 64K

- Finally 1M

This is cheaper than training at 1M from scratch.
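Here's what a progressive schedule looks like as code. The four stage lengths come from the list above, read as powers of two, which is an assumption. The switch points are made-up fractions of the 32T-token budget, since the article doesn't give them. The second function shows why this saves money. It assumes dense attention, where per-token attention cost grows linearly with sequence length. V4's compressed attention changes the exact numbers.

```python
STAGES = [4_096, 16_384, 65_536, 1_048_576]  # 4K, 16K, 64K, 1M (assumed powers of two)
TOTAL_TOKENS = 32e12                          # 32T-token budget from the article
SWITCH_AT = [0.90, 0.96, 0.99]                # HYPOTHETICAL fractions where each new stage starts


def context_length(tokens_seen: float) -> int:
    """Sequence length to train at after `tokens_seen` training tokens."""
    stage = sum(tokens_seen >= f * TOTAL_TOKENS for f in SWITCH_AT)
    return STAGES[stage]


def relative_attention_cost(seq_len: int) -> float:
    """Dense-attention cost per token at seq_len, relative to training at 1M."""
    return seq_len / STAGES[-1]


for seen in (1e12, 29.5e12, 31e12, 31.9e12):
    length = context_length(seen)
    print(f"{seen / 1e12:>5.1f}T tokens -> {length:>9,} ctx, "
          f"cost/token x{relative_attention_cost(length):.4f}")
```

Under that assumption, a token trained at 4K costs 1/256 of the attention compute of a token trained at 1M. That's why most of the budget goes to short sequences.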

Efficiency Stats — The Real Headline

Spec sheet highlights:

- V4 Pro: 27% of V3.2 compute, 10% of KV cache

- V4 Flash: 10% of V3.2 compute, 7% of KV cache

A bigger, smarter model running on a fraction of the compute: that's the story.
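One way to read those ratios: if KV cache is what limits you, as it often is at long context, a 10% cache means about 10x as many concurrent long-context sessions on the same hardware. Here's a quick sketch using only the spec-sheet numbers. It assumes the ratios hold at the same context length.

```python
# Spec-sheet ratios from above, relative to DeepSeek V3.2.
RATIOS = {
    "V4 Pro": {"compute": 0.27, "kv_cache": 0.10},
    "V4 Flash": {"compute": 0.10, "kv_cache": 0.07},
}


def multiplier(ratio: float) -> float:
    """How many times more work fits in the same budget at this ratio."""
    return 1 / ratio


for model, r in RATIOS.items():
    print(f"{model}: {multiplier(r['compute']):.1f}x per unit compute, "
          f"{multiplier(r['kv_cache']):.1f}x sessions per unit KV memory")
```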

How to Run DeepSeek V4 Locally

Two routes.

LM Studio Route

- Download LM Studio

- Search "DeepSeek V4 Flash"

- Pick a quant that fits your VRAM (4-bit GGUF is usually the sweet spot)

- Load and chat
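Before you download, do the VRAM math. This rough sketch counts weight memory only, using the parameter counts from the top of this post. Two caveats apply. An MoE model has to keep all of its experts loaded, or offloaded to system RAM, not just the active ones. Real 4-bit GGUF quants also spend a bit more than 4 bits per weight. KV cache and runtime overhead come on top.

```python
def weight_memory_gb(total_params: float, bits_per_weight: float) -> float:
    """Approximate weight memory in decimal gigabytes (weights only)."""
    return total_params * bits_per_weight / 8 / 1e9


# Total (not active) parameters: every expert must be resident or offloaded.
MODELS = {"V4 Flash": 284e9, "V4 Pro": 1.6e12}

for name, params in MODELS.items():
    for bits in (4, 8):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):,.0f} GB")
```

Even at 4-bit, Flash needs around 142 GB for the weights alone. On a single consumer GPU, expect heavy CPU offloading or a smaller quant.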

Hugging Face Route

- Go to deepseek-ai/DeepSeek-V4-Flash on Hugging Face

- Pull the weights

- Serve via vLLM, TGI, or llama.cpp
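For the vLLM route, this sketch builds the serve command. The repo id is the one above. `vllm serve`, `--tensor-parallel-size`, `--max-model-len`, and `--trust-remote-code` are standard vLLM CLI options. Check two things yourself: whether your vLLM version supports V4 yet, and how many GPUs you need. I capped context at 128K to keep KV memory manageable, so raise it if your hardware allows. To pull the weights first, `huggingface_hub.snapshot_download(repo_id=...)` does the job.

```python
import shlex

REPO_ID = "deepseek-ai/DeepSeek-V4-Flash"  # repo name from the article


def vllm_serve_command(repo_id: str, gpus: int, max_len: int) -> list[str]:
    """Assemble a `vllm serve` command line as an argv list."""
    return [
        "vllm", "serve", repo_id,
        "--tensor-parallel-size", str(gpus),  # shard the model across this many GPUs
        "--max-model-len", str(max_len),      # cap context to bound KV-cache memory
        "--trust-remote-code",                # allow custom model code from the repo
    ]


# 8 GPUs is an illustrative guess, not a tested requirement.
print(shlex.join(vllm_serve_command(REPO_ID, gpus=8, max_len=131_072)))
# vllm serve deepseek-ai/DeepSeek-V4-Flash --tensor-parallel-size 8 --max-model-len 131072 --trust-remote-code
```

Once it's up, vLLM exposes an OpenAI-compatible server (port 8000 by default), so any OpenAI-style client can talk to it by pointing at that URL.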

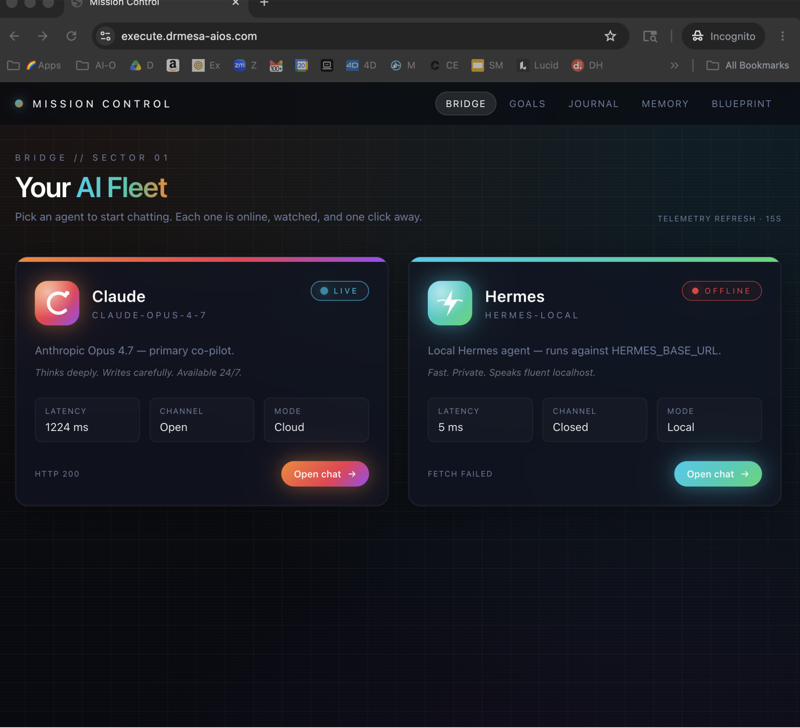

Pairs well with Ollama + Hermes for a full local stack.

When to Use DeepSeek V4 vs Alternatives

Clear picks.

Choose DeepSeek V4 If

- Cost is a major concern

- You're building agents at scale

- You need 1M context cheaply

- Factual accuracy matters more than creativity

- You want to self-host

Choose Claude Opus 4.6 If

- Output polish matters

- You're building for end users

- UI/design generation is important

Choose GPT 5.5 If

- You want best-in-class creative writing

- You need the OpenAI ecosystem (Assistants API, etc.)

- You're doing multimodal work

Choose Kimi K2.6 If

- You want agent swarms with strong open-source support

- You want a slightly different architecture to compare

FAQ

Is DeepSeek V4 free?

Yes — chat.deepseek.com is free.

The API is paid, but it is the cheapest of the major frontier models.

Self-hosting is free apart from hardware costs.

How do I access DeepSeek V4?

Three ways:

- chat.deepseek.com (web chat)

- platform.deepseek.com (API)

- LM Studio or Hugging Face (local)

What's the context window on DeepSeek V4?

1 million tokens on both Pro and Flash.

Is DeepSeek V4 better than Claude?

Better on factual benchmarks.

Worse on UI generation and creative polish in my testing.

What's the difference between Instant and Expert mode?

Instant is non-think, fast.

Expert is more careful, with optional Deep Think reasoning (up to 384K tokens).

Can I fine-tune DeepSeek V4?

Yes — it's open source. Weights are on Hugging Face.

Related Reading

- GPT 5.5 Pro — the OpenAI competitor launched the same day

- Claude Opus 4.7 AI SEO — the polish benchmark I compare against

- Kimi K2.6 agent swarms — another open-source option worth comparing

⚡ Build smarter agents, spend less on AI. Inside the AI Profit Boardroom I teach the exact DeepSeek V4 agent workflow — system prompts, cost routing, local-vs-API decisions, n8n automations. 3,000+ members, weekly live coaching. → Get access here


Closing Thoughts

DeepSeek V4 is not the best model on the market — but it is by far the most interesting release this quarter, and this deepseek v4 tutorial should give you everything you need to decide if it fits your stack.