Hermes Gemma 4 cut my API bill in half last month. No joke.

Let me tell you what happened.

I was running Hermes all day for content, research, code review, automation — the usual.

Every single session was burning through cloud API tokens.

I looked at my bill.

Winced.

Then Gemma 4 dropped from Google as the latest open-source lightweight model.

I plugged it into Hermes.

And now half my agent workload runs for free on my own laptop.

This article is the complete "how" — plus the honest "why I'd bother" and "when I'd still pay for cloud."

No fluff. Just what I've learned.

The Core Idea

Gemma 4 is Google's latest open-source, lightweight, efficient model.

It's designed to run on normal hardware — not data centres.

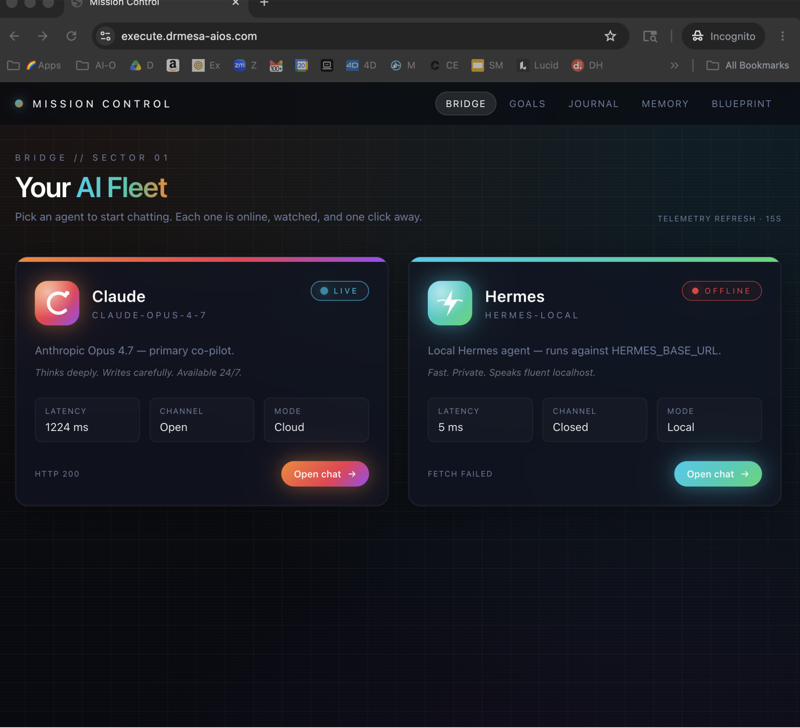

Hermes is the AI agent that orchestrates your work.

Put them together with Ollama in the middle, and you've got a completely free local AI agent stack.

Key things that matter about Hermes Gemma 4:

- Runs 100% locally on your machine

- No API fees, no rate limits

- The 18GB and 20GB variants ship with a 256K context window, bigger than MiniMax M2.7 and many frontier cloud models

- Works brilliantly as the sub-agent model under a cloud-powered orchestrator

- Takes about 5 minutes to set up, plus however long the model download takes

That last one matters because a lot of "local AI" setups are a weekend project. This isn't.

How I Actually Use Hermes Gemma 4

I want to ground this in reality before we get into the setup.

Here's my real workflow:

Morning: Orchestrator Hermes session, pointed at Claude Opus 4.7 for the big reasoning. I'm planning, writing, strategising.

Midday: 10-20 sub-agent Hermes sessions firing off in parallel, all pointed at local Gemma 4. They're summarising research, filling content templates, classifying emails, tagging data.

Overnight: A batch Gemma 4 job running on big-context tasks — reviewing last week's transcripts, building a month-level summary, cleaning data files.

Total API cost for the sub-agent and batch work: £0.

Total API cost for the orchestrator: whatever Claude charges me that day.

Before Gemma 4, I was paying for all of it.

Now I only pay for the hardest 20%.

That's the real value of Hermes Gemma 4.
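The split above boils down to a routing rule: routine, well-defined jobs go to the local model, and the hard reasoning goes to the cloud. Here's a minimal Python sketch of that idea. The task labels are hypothetical, and run_local / run_cloud are placeholders for whatever actually calls Gemma 4 and your cloud model. This is not how Hermes schedules sub-agents internally.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical task labels, mirroring the midday sub-agent jobs described above.
LOCAL_TASKS = {"summarise", "fill_template", "classify_email", "tag_data"}


def route(task_kind: str) -> str:
    """Return 'local' for routine jobs, 'cloud' for everything else."""
    return "local" if task_kind in LOCAL_TASKS else "cloud"


def fan_out(
    jobs: list[tuple[str, str]],
    run_local: Callable[[str], str],
    run_cloud: Callable[[str], str],
    max_workers: int = 10,
) -> list[str]:
    """Run (task_kind, prompt) jobs concurrently; results come back in input order."""

    def run(job: tuple[str, str]) -> str:
        kind, prompt = job
        runner = run_local if route(kind) == "local" else run_cloud
        return runner(prompt)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run, jobs))


if __name__ == "__main__":
    # Stub runners so the sketch runs without any model installed.
    jobs = [("summarise", "meeting notes"), ("plan_launch", "Q3 strategy")]
    print(fan_out(jobs, run_local=lambda p: f"local: {p}", run_cloud=lambda p: f"cloud: {p}"))
    # ['local: meeting notes', 'cloud: Q3 strategy']
```

Local concurrency is still bounded by your hardware. Depending on its server settings, Ollama may queue simultaneous requests rather than run them all at once.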

I covered the multi-session workflow in more depth in Hermes agent workspace, if you want the bigger picture.

The Setup — In Plain English

1. Install Ollama

Go to ollama.com.

Copy the install command they show you.

Paste into terminal. Hit enter.

Ollama is now running in the background.

Verify with ollama list. If it prints a model table (empty is fine on a fresh install) instead of an error, you're good.
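ollama list works by talking to the local Ollama server, which is the same server Hermes will connect to. If you'd rather script that check, here's a minimal stdlib-only Python sketch. It relies on Ollama's root URL replying with a short "Ollama is running" banner. The function names are my own.

```python
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # default Ollama address used in this guide


def is_ollama_banner(body: str) -> bool:
    """Ollama's root endpoint answers with a short plain-text banner."""
    return "ollama is running" in body.lower()


def ollama_is_up(base_url: str = OLLAMA_URL, timeout: float = 2.0) -> bool:
    """True if an Ollama server answers at base_url, False on any connection problem."""
    try:
        with urllib.request.urlopen(base_url.rstrip("/") + "/", timeout=timeout) as resp:
            return is_ollama_banner(resp.read().decode("utf-8", errors="replace"))
    except OSError:
        # URLError, refused connections and timeouts are all OSError subclasses.
        return False


if __name__ == "__main__":
    print("Ollama is up" if ollama_is_up() else "Ollama is not reachable; start it and retry")
```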

2. Install Gemma 4

On ollama.com, go to Models. Click Gemma 4.

Pick a variant:

- 128K-context smaller Gemma 4 → good default, runs on most machines

- 256K-context 18GB or 20GB Gemma 4 → the big one. Needs beefier hardware but worth it.

Copy the install command on that page. Paste it. Run it. Wait for download.

Done.

3. Start Hermes And Run hermes model

Open a new Hermes chat.

Run:

hermes model

A list of model endpoints appears.

4. Select Custom Endpoint

Scroll through the list.

Select Custom Endpoint — the one where you enter the URL manually.

5. Paste The Ollama URL

Hermes asks for a URL.

Paste http://localhost:11434 (the default Ollama URL).

Crucial: Ollama must already be running in the background. If you've rebooted, start it again first (open the Ollama app, or run ollama serve in a terminal).

6. API Key

Hermes asks for an API key next.

Two totally fine options:

- Leave blank

- Type "Ollama"

Hit enter.
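Why both options work: Ollama doesn't check the API key. Its OpenAI-compatible endpoint (under /v1) accepts requests with any key or none. OpenAI-style clients often insist on sending one anyway, which is why a placeholder like "Ollama" is common. Below is a minimal Python sketch of the kind of request such a client sends. The model tag gemma4:latest is an assumption, so use the exact name ollama list shows. Whether Hermes uses this exact path is also an assumption.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"
MODEL = "gemma4:latest"  # assumed tag; copy the exact name from `ollama list`


def build_chat_request(prompt: str, api_key: str = "ollama",
                       base_url: str = OLLAMA_URL, model: str = MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat request aimed at Ollama's /v1 compatibility layer."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {"Content-Type": "application/json"}
    if api_key:  # Ollama ignores the key; it's only sent because many clients expect one
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    try:
        with urllib.request.urlopen(build_chat_request("Say hello in five words."), timeout=120) as resp:
            print(json.load(resp)["choices"][0]["message"]["content"])
    except OSError as exc:
        print(f"Request failed: {exc}")
```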

7. Pick Gemma 4 And Run

Hermes queries Ollama, pulls the list of installed models, and shows them.

Select Gemma 4 latest.

Leave the next prompt blank.

Run Hermes.

That's the whole thing.
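This guide doesn't spell out the exact request Hermes makes when it lists models, but you can run the same lookup yourself. Ollama's /api/tags endpoint returns every locally installed model, so a short Python sketch can confirm Gemma 4 is actually there. That's handy if Gemma 4 doesn't show up in Hermes. The gemma4 name prefix is a guess, so adjust the filter to match what ollama list prints.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # default Ollama address from the steps above


def model_names(tags_payload: dict) -> list[str]:
    """Pull the model names out of an Ollama /api/tags response."""
    return [m["name"] for m in tags_payload.get("models", [])]


def gemma4_models(names: list[str]) -> list[str]:
    """Keep names that look like Gemma 4 (the 'gemma4' prefix is an assumption)."""
    return [n for n in names if n.lower().replace("-", "").startswith("gemma4")]


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> dict:
    """GET /api/tags from the local Ollama server and decode the JSON body."""
    with urllib.request.urlopen(base_url.rstrip("/") + "/api/tags", timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    try:
        names = model_names(fetch_tags())
    except OSError as exc:
        print(f"Could not reach Ollama: {exc}")
    else:
        print("Installed:", names)
        print("Gemma 4:", gemma4_models(names) or "none found, re-run the pull command")
```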

🔥 Want a screenshot-by-screenshot walkthrough? Inside the AI Profit Boardroom, I've got a full Hermes agent section with step-by-step video tutorials showing exactly this setup, plus daily SOP training — yesterday I walked through the brand new Hermes 0.7 update. Weekly coaching calls where you share your screen and get direct feedback. 3,000+ members already inside. → Grab access here

The Command Summary

For copy-paste people:

# 1. Install Ollama from ollama.com

# 2. Pull Gemma 4 (command from ollama.com Models page)

# 3. In Hermes:

hermes model

→ select Custom Endpoint

→ paste http://localhost:11434

→ API key: Ollama (or leave blank)

→ select Gemma 4 latest

→ leave next prompt blank

→ run Hermes

That's Hermes Gemma 4.

Why 256K Context Is Unfair

I can't overstate this.

The big Gemma 4 variants (18GB, 20GB) ship with 256K context.

That's bigger than MiniMax M2.7.

Bigger than a lot of frontier cloud models.

On your local machine.

For free.

What that means in practice:

- Dump in a PDF of a few hundred pages. Gemma 4 can read all of it at once.

- Feed it a small or mid-sized codebase. It can reason across every file.

- Run a Hermes sub-agent on a 3-hour research task without the context window filling up.
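Here's a rough sanity check on what actually fits, worked as back-of-envelope arithmetic in Python. Every constant is an assumption: about 500 words on a dense page, about 1.3 tokens per English word, and "256K" read as 256 × 1,024 = 262,144 tokens. Real token counts depend on the tokenizer and the document.

```python
# Back-of-envelope page-to-token arithmetic; all three constants are assumptions.
WORDS_PER_PAGE = 500          # a dense page of prose
TOKENS_PER_100_WORDS = 130    # roughly 1.3 tokens per English word
CONTEXT_TOKENS = 256 * 1024   # reading "256K" as 262,144 tokens


def tokens_for_pages(pages: int) -> int:
    """Estimated tokens for a document of `pages` pages (integer maths, rounded down)."""
    return pages * WORDS_PER_PAGE * TOKENS_PER_100_WORDS // 100


def max_pages(context_tokens: int = CONTEXT_TOKENS) -> int:
    """Largest page count whose estimate still fits inside the context window."""
    return context_tokens * 100 // (WORDS_PER_PAGE * TOKENS_PER_100_WORDS)


if __name__ == "__main__":
    print(tokens_for_pages(500))  # 325000
    print(max_pages())            # 403
```

On those assumptions, a 500-page PDF (about 325,000 tokens) overshoots a 256K window. Roughly 400 pages is the ceiling, and that's before you leave room for instructions and the answer.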

Cloud providers charge a premium for large context windows, and you now have a bigger one than many of them on your laptop.

This wasn't possible a year ago.

It is now.
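There's one practical catch before you rely on that window. Ollama usually loads a model with a context far smaller than its maximum unless you ask for more, and every extra token of context costs memory. You can raise it per request with the num_ctx option on Ollama's native /api/chat endpoint. Recent Ollama versions also read a server-wide OLLAMA_CONTEXT_LENGTH environment variable. Below is a minimal Python sketch. The model tag and the 131,072 default are my assumptions, so start smaller and step up only if your RAM allows. This guide doesn't cover whether Hermes forwards num_ctx through its custom endpoint, so the server-wide setting may be the simpler route for Hermes sessions.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"
MODEL = "gemma4:latest"  # assumed tag; copy the exact name from `ollama list`


def chat_payload(prompt: str, num_ctx: int, model: str = MODEL) -> dict:
    """Body for Ollama's native /api/chat endpoint with an explicit context size."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,                  # return one JSON object instead of a token stream
        "options": {"num_ctx": num_ctx},  # context window (in tokens) for this request
    }


def ask(prompt: str, num_ctx: int = 131072, base_url: str = OLLAMA_URL) -> str:
    """Send one prompt and return the assistant's reply text."""
    req = urllib.request.Request(
        base_url.rstrip("/") + "/api/chat",
        data=json.dumps(chat_payload(prompt, num_ctx)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.load(resp)["message"]["content"]


if __name__ == "__main__":
    try:
        print(ask("In one sentence, what is a context window?", num_ctx=32768))
    except OSError as exc:
        print(f"Request failed: {exc}")
```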

Where Hermes Gemma 4 Shines

I'll be honest about where this setup wins:

Sub-agent work. The number one use case. Parallel Hermes sub-agents running Gemma 4 cost nothing and never hit a provider rate limit; your hardware is the only throttle.

Privacy-sensitive tasks. Client data, internal docs, anything you don't want hitting a cloud API. Gemma 4 runs locally — nothing leaves your machine.

Bulk low-intelligence jobs. Classifying emails. Tagging content. Filling templates. Summarising meeting notes. Gemma 4 is plenty smart for this.

Massive context jobs. Anywhere you need 150K+ context without paying cloud premiums.

Learning and experimenting. Zero cost means you can bash on it for hours without the meter ticking.

Where I Still Reach For Cloud

Equally honest:

Hardest reasoning tasks. Claude Opus 4.7 and MiniMax M2.7 are still sharper when the task actually requires deep thought.

Agentic tool-heavy workflows. MiniMax M2.7 is built specifically to run tools; it's designed to be agentic and self-improving.

Client-facing high-stakes content. The final polish layer still gets a frontier model.

You can run MiniMax M2.7 through the same Hermes custom endpoint flow: hermes model → custom endpoint → type "2" → leave blank → run Hermes. Done.

I talked more about the OpenClaw + cloud combo in OpenClaw Byterover.

Troubleshooting — Real Problems I've Seen

Ollama isn't responding. Run ollama list in a separate terminal. If it errors, restart Ollama.

Gemma 4 not in Hermes list. The download probably didn't finish. Go back to ollama.com and re-run the install command.

Machine overheating or grinding to a halt. You probably grabbed a variant too big for your hardware. Drop to a smaller Gemma 4 variant.

Outputs feel flat. Local models need more prompt structure than cloud models. Give Gemma 4 examples and spell out the output format clearly.

"Connection refused" error. Either Ollama isn't running or the URL is wrong. It should be http://localhost:11434 unless you've specifically reconfigured Ollama.

FAQ — Hermes Gemma 4

What is Gemma 4?

Google's latest open-source, lightweight, efficient AI model, designed to run on consumer hardware. The weights are openly released, so you can run it locally for free.

How do I connect Hermes to Gemma 4?

Install Ollama, pull Gemma 4 via ollama.com, then in Hermes run hermes model, select Custom Endpoint, paste the Ollama URL, set API key to "Ollama" (or blank), and pick Gemma 4 latest from the model list.

Is Hermes Gemma 4 free forever?

Yes. Gemma 4 is open-source and Ollama runs it locally. There are no API fees at any point, just your electricity cost.

Can Hermes Gemma 4 replace cloud AI?

Partially. Use it for sub-agents, bulk work, private data, and large-context jobs. Keep a cloud model like Claude Opus 4.7 or MiniMax M2.7 for your hardest reasoning tasks.

What's the context window on Hermes Gemma 4?

128K on smaller variants. 256K on the 18GB and 20GB variants — bigger than MiniMax M2.7 and many frontier cloud models.

Can Hermes Gemma 4 run tools?

Yes. Gemma 4 is built to be agentic: it handles tool calls, works with Hermes sub-agents, and can run autonomous workflows. For the most tool-heavy workflows, though, I still reach for MiniMax M2.7.

Related Reading

- Hermes agent workspace — the multi-session workflow context

- Ollama + Hermes — the foundational Ollama setup

- OpenClaw Byterover — pairing agents with other tooling

Last Thought

Hermes Gemma 4 is the easiest win I've had on AI costs in a long time.

Install Ollama.

Pull Gemma 4.

Wire it up via Hermes' custom endpoint.

Start running your sub-agents for free.

Save the cloud budget for the 20% of tasks that actually need it.

🚀 Ready to get serious about your AI workflow? Inside the AI Profit Boardroom there's a 2-hour Hermes course, a 6-hour OpenClaw course, daily SOPs, weekly coaching calls, 3,000+ members, and 145 pages of member wins. The classroom has the best trainings on AI automation on the internet. → Come inside

Video notes + links to the tools 👉 https://www.skool.com/ai-profit-lab-7462/about

Learn how I make these videos 👉 https://aiprofitboardroom.com/

Get a FREE AI Course + Community + 1,000 AI Agents 👉 https://www.skool.com/ai-seo-with-julian-goldie-1553/about

Run Hermes Gemma 4 tonight. Your API bill next month will thank you.