DeepSeek V4 through Ollama, or the DeepSeek API direct? That's the question I've been asked thirty times this week, so let me settle it once and for all.

Short answer: Ollama wins for almost everyone. Here's the long answer.

There are basically three ways to use DeepSeek V4 right now.

- The official DeepSeek web chat at chat.deepseek.com

- The DeepSeek API directly

- DeepSeek V4 Flash through Ollama (cloud model)

Each one has a use case. But for the way I work — and probably the way you work — option 3 is the answer 9 times out of 10.

Let me explain why.

Option 1: The Web Chat (chat.deepseek.com)

The web chat is fine. It's a chat box. You type, it responds.

What it can't do:

- Browse the web for you

- Run shell commands

- Schedule tasks

- Pipe into other tools

- Drive a browser

- Build full code projects

It's a chat interface. End of capability list.

For a quick question, it's perfectly serviceable. For anything agentic, useless.

Option 2: The DeepSeek API Direct

Now we're getting somewhere. The official API gives you raw model access.

You can build whatever you want on top of it.

But here's the thing — to actually use it, you need to either:

- Build your own harness (loops, tool use, retries — weeks of work)

- Buy or use someone else's harness that's wired for DeepSeek's specific API format

Most popular harnesses (Claude Code, OpenClaw, Hermes, Codex, Open Code) can be pointed at Ollama as their local-model interface.

So if you go API-direct, you're either coding your own agent loop or fighting compatibility issues.
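
To give a feel for what "coding your own agent loop" involves, here's a minimal sketch of just one piece of it: retrying a failed model call with exponential backoff. The function name `call_with_retries` and its parameters are my own illustration, not part of any DeepSeek or Ollama library, and `call_model` stands in for whatever function actually talks to the API.

```python
import time
from typing import Callable, Optional


def call_with_retries(
    call_model: Callable[[str], str],
    prompt: str,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call the model, waiting longer after each failure before retrying."""
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            return call_model(prompt)
        except Exception as exc:  # a real harness would catch narrower errors
            last_error = exc
            # Back off 0.5s, 1s, 2s, ... but don't sleep after the final attempt.
            if attempt < max_attempts - 1:
                sleep(base_delay * (2 ** attempt))
    raise RuntimeError(
        f"model call failed after {max_attempts} attempts"
    ) from last_error
```

And that's only retries. Tool use, planning loops and state handling all sit on top of this, which is where the weeks go.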

Plus you're paying per token. Even at DeepSeek's low prices, that adds up.
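
If you want to see how "adds up" plays out for your own usage, the arithmetic is simple. This is a rough sketch: `monthly_token_cost` is a name I made up, and the price in the example is a placeholder, not DeepSeek's actual rate. Plug in the current number from their pricing page.

```python
def monthly_token_cost(
    tokens_per_day: int,
    price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate a monthly bill from daily token volume and a per-million price."""
    return tokens_per_day * days / 1_000_000 * price_per_million


# Hypothetical example: an agent burning 2M tokens a day
# at a placeholder price of $0.50 per million tokens.
estimate = monthly_token_cost(tokens_per_day=2_000_000, price_per_million=0.50)
print(f"${estimate:.2f} per month")
```

Agent loops re-send context on every step, so daily token counts climb much faster than they do in a plain chat.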

Option 3: DeepSeek V4 Ollama (The Winner)

Here's the magic.

DeepSeek V4 Flash is a cloud model inside Ollama. You install Ollama, run one command, and you've got the same DeepSeek V4 brain — but accessed through the standard Ollama interface.

Why that matters:

- Free within generous tier limits (no API bill)

- No local hardware needed (cloud-hosted)

- Every Ollama-compatible harness works instantly — Claude Code, OpenClaw, Hermes, Codex, Open Code

- One command install:

ollama run deepseek-v4-flash

You get the model, the harness compatibility, and the zero cost — all in one move.
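
If you'd rather script against it than chat in the terminal, here's a minimal sketch of calling the model through Ollama's local HTTP API. I'm assuming Ollama is running on its default address (`localhost:11434`) and that the model name matches the command above. The helper names `build_chat_request` and `chat` are mine, and since this is a cloud model you'll probably need to be signed in to Ollama for the call to go through.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local address
MODEL = "deepseek-v4-flash"  # model name taken from the run command above


def build_chat_request(prompt: str, model: str = MODEL) -> dict:
    """Build the JSON body for a single-turn, non-streaming chat request."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete reply instead of chunks
    }


def chat(prompt: str, model: str = MODEL, url: str = OLLAMA_URL) -> str:
    """Send the prompt to the local Ollama server and return the reply text."""
    body = json.dumps(build_chat_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    # Supplying data makes urllib send this as a POST request.
    with urllib.request.urlopen(request, timeout=120) as response:
        reply = json.loads(response.read())
    return reply["message"]["content"]
```

Calling `chat("Explain this regex: ^\\d{3}-\\d{4}$")` should return the model's answer as a plain string, provided the Ollama server is up.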

That's why DeepSeek V4 Ollama is the winner for everyone except people building production-scale infrastructure who genuinely need the raw API.

DeepSeek V4 Ollama Side-by-Side: The Honest Comparison

Let me lay it out clean.

Setup time

- Web chat: 0 seconds (just visit the site)

- API direct: 30+ minutes (account, keys, code wiring)

- DeepSeek V4 Ollama: 4 minutes (install, run)

Cost

- Web chat: free (with limits)

- API direct: pay per token

- DeepSeek V4 Ollama: free (with generous limits)

Agentic capability

- Web chat: none

- API direct: as much as you build

- DeepSeek V4 Ollama: instant via existing harnesses

Browser automation (e.g. via OpenClaw)

- Web chat: impossible

- API direct: possible after harness build

- DeepSeek V4 Ollama: works out of the box

Scheduled agents (e.g. via Hermes)

- Web chat: impossible

- API direct: possible after harness build

- DeepSeek V4 Ollama: works out of the box

You see the pattern.

The Ollama route gives you nearly everything the API gives you, plus instant compatibility with the harnesses everyone's using, minus the bill.

The "But What About Privacy?" Question

Fair question.

DeepSeek V4 Ollama still hits cloud servers — your prompts go to Ollama's hosted infrastructure, which forwards to the model.

If you have strict data sensitivity requirements, you need a true local model — something like Llama 3 or a local DeepSeek variant downloaded to your machine.

For 95% of work — research, drafting, coding, browser automation — the cloud trip is fine.

If you need fully local, that's a different walkthrough — start with Claude Code local and build from there.

What the Same Model Can Do in Different Bodies

This is the bit nobody explains properly.

DeepSeek V4 raw on chat.deepseek.com? Chatbot.

DeepSeek V4 through API + your custom code? Whatever you build.

DeepSeek V4 Ollama + Claude Code? Coding agent with planning loop.

DeepSeek V4 Ollama + OpenClaw? Live browser automation.

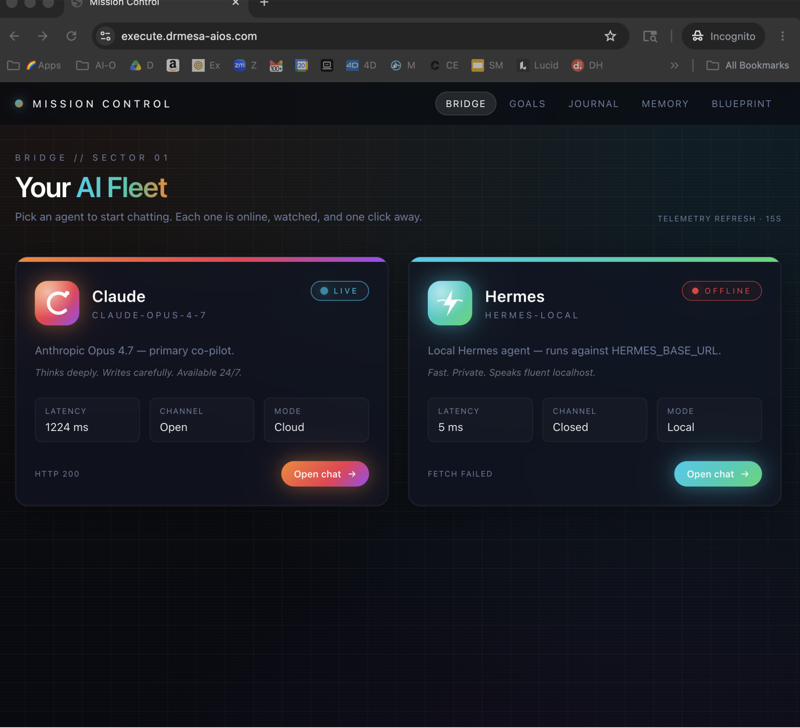

DeepSeek V4 Ollama + Hermes? Scheduled research agent.

DeepSeek V4 Ollama + Codex? Code generator that builds full projects.

Same model. Different harness. Wildly different capability.

That's the unlock — the harness controls the API calls, the tool use, the loops. The model is just the brain.
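
Here's a toy sketch of that split, with the same "brain" dropped into two different harnesses. Everything in it is illustrative: `stub_model` is a stand-in for a real model call, and the `TOOL name: argument` reply format is something I invented for the example, not how any of the harnesses above actually talk to the model.

```python
from typing import Callable, Dict

Model = Callable[[str], str]


def stub_model(text: str) -> str:
    """Stand-in for a real model: asks for a tool once, then answers."""
    if "[word_count returned]" in text:
        count = text.rsplit("] ", 1)[1]
        return f"Done. {count} words."
    return "TOOL word_count: the quick brown fox"


def chat_harness(model: Model, prompt: str) -> str:
    """Simplest harness: one prompt in, one reply out."""
    return model(prompt)


def tool_harness(
    model: Model,
    prompt: str,
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Loop: run any tool the model asks for and feed the result back."""
    context = prompt
    for _step in range(max_steps):
        reply = model(context)
        if not reply.startswith("TOOL "):
            return reply  # plain answer, so the loop is finished
        name, _sep, arg = reply[len("TOOL "):].partition(":")
        name = name.strip()
        tool = tools.get(name)
        result = tool(arg.strip()) if tool else f"unknown tool {name}"
        context = f"{context}\n[{name} returned] {result}"
    return "stopped: step limit reached"


tools = {"word_count": lambda s: str(len(s.split()))}
print(chat_harness(stub_model, "count the words"))
print(tool_harness(stub_model, "count the words", tools))
```

The chat harness just hands back the model's raw tool request. The tool harness runs the tool, loops, and gets a finished answer out of the exact same model.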

DeepSeek V4 Ollama gives you the brain in the most universally-compatible format possible.

My Real DeepSeek V4 Ollama Workflow

In a typical day I use DeepSeek V4 Ollama through:

- Claude Code for refactoring sessions (pairs well with the workflow in Claude Code AI SEO)

- OpenClaw for one-off browser tasks

- Hermes for the daily news research agent

- Raw Ollama chat for quick "explain this regex" questions

I don't touch the API direct anymore unless I'm building something custom that needs fine control.

I don't use chat.deepseek.com because I lose the harness layer.

When the API Direct Actually Wins

Be fair to the API.

It wins when:

- You're building a production product with thousands of users

- You need fine-grained billing visibility

- You need specific API features Ollama doesn't expose yet

- You need to hit DeepSeek's largest non-Flash variants

For those cases, go API.

For everyone else — solo operators, agency owners, indie builders, content creators — DeepSeek V4 Ollama is the move.

Related reading

- DeepSeek V4 Tutorial — the model deep-dive

- Claude Code Free — the harness primer

- Ollama + Hermes — scheduled agents on free Ollama models

FAQ

Is DeepSeek V4 Ollama actually faster than the API direct?

For most use cases, response time is comparable, since both routes call cloud infrastructure. What the Ollama route saves you is the time you'd spend writing boilerplate code, and that's where the real speed-up lives.

Can I switch from DeepSeek V4 Ollama to API direct later?

Yes — start on Ollama to test, swap to API direct if you need scale or specific features. The model itself is the same; only the access path changes.
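
One way to keep that switch cheap is to put the access path in config from day one. This is a sketch under a few assumptions: the `Backend` class and `pick_backend` helper are names I made up, the Ollama entry uses its OpenAI-compatible endpoint on the default local port, and the model name for the direct API is a placeholder you'd replace with whatever DeepSeek's docs list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    base_url: str
    model: str
    needs_api_key: bool


BACKENDS = {
    # Ollama's OpenAI-compatible endpoint on the default local port.
    "ollama": Backend(
        name="ollama",
        base_url="http://localhost:11434/v1",
        model="deepseek-v4-flash",
        needs_api_key=False,
    ),
    # DeepSeek's hosted API. The model name is a placeholder, not a real ID.
    "deepseek-api": Backend(
        name="deepseek-api",
        base_url="https://api.deepseek.com",
        model="REPLACE_WITH_MODEL_NAME_FROM_DEEPSEEK_DOCS",
        needs_api_key=True,
    ),
}


def pick_backend(name: str) -> Backend:
    """Look up a backend by name, failing loudly on typos."""
    try:
        return BACKENDS[name]
    except KeyError:
        known = ", ".join(sorted(BACKENDS))
        raise ValueError(f"unknown backend {name!r}; choose from: {known}") from None


backend = pick_backend("ollama")
print(backend.base_url, backend.model)
```

With that in place, moving to the API later is a one-word change in config rather than a rewrite.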

Does DeepSeek V4 Ollama have lower-quality output than the API?

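No, as long as you're comparing the same variant. DeepSeek V4 Flash through Ollama is the same underlying model as Flash through the API; Ollama just provides the access wrapper. The API's larger non-Flash variants are a separate comparison.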

Why would anyone use chat.deepseek.com over DeepSeek V4 Ollama?

Honestly, only if they want a one-off quick chat with no setup. Once you've got Ollama installed (4 minutes), there's no real reason to go back to the web chat.

Is DeepSeek V4 Ollama secure for client work?

For most agency/freelance work, yes. For regulated industries (health, finance, legal) where data residency matters, look at fully local models or DeepSeek's enterprise tier instead.

What happens if Ollama removes the free tier?

Possible. While it's free, I'm using it heavily and saving the playbooks. If they monetise, the harnesses still work — you'd just point them at the paid version or swap models.

DeepSeek V4 Ollama beats the API direct route for almost every solo operator and indie builder I know — install it today and you'll see why I stopped paying for tokens.