Generic Agent uses under 30,000 tokens per run.

Most AI agent frameworks burn 200,000 to 1,000,000 tokens for the same work.

That's roughly a 7x to 33x efficiency improvement.

If you've ever stared at an OpenAI bill and wondered where the money went — this post is for you.

I'm going to break down exactly how Generic Agent pulls off that token efficiency, why it matters in practical terms, and what it means for the future of AI agents.

The Token Bill That Killed My Last AutoGPT Setup

Let me start with a war story.

Six months ago I was running AutoGPT for a content automation pipeline.

Worked fine.

Did the job.

Cost me £400 a month in OpenAI tokens.

Looked at the bill, broke it down, realised about 70% of the tokens were context — the agent was reloading the same workflow context every single run.

Burning tokens to relearn what it already knew.

That's the AutoGPT problem in one paragraph.

Generic Agent solves it with three architecture choices that matter.

Generic Agent Architecture Choice 1 — Memory Layering

Generic Agent doesn't load all your history into context every run.

It layers memory into tiers.

Tier 1 — active task context (loaded every run, kept tight)

Tier 2 — recently used skills (loaded if relevant)

Tier 3 — archived skills (only loaded when explicitly referenced)

Most of the time only Tier 1 is in active context.

That's why the token count stays under 30K — most of your knowledge sits in cold storage until needed.

AutoGPT and similar frameworks load everything into hot context every time.

That's the difference.
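
Here's a minimal sketch of the tiering idea in Python. It is my own illustration, not Generic Agent's actual code: the `TieredMemory` class, its field names, and the keyword-match rule for Tier 2 are all assumptions made to show the shape of the approach.

```python
from dataclasses import dataclass, field


@dataclass
class TieredMemory:
    """Hypothetical three-tier store; not Generic Agent's real API."""
    active: list[str] = field(default_factory=list)         # Tier 1: loaded every run
    recent: dict[str, str] = field(default_factory=dict)    # Tier 2: loaded if relevant
    archived: dict[str, str] = field(default_factory=dict)  # Tier 3: loaded only when named

    def build_context(self, task: str, explicit_refs: tuple[str, ...] = ()) -> list[str]:
        task_words = set(task.lower().split())
        context = list(self.active)  # Tier 1 always goes in
        # Tier 2: include a skill only if its name appears in the task text
        # (a crude relevance check, assumed for illustration).
        for name, body in self.recent.items():
            if name.lower() in task_words:
                context.append(body)
        # Tier 3: include only skills the caller referenced explicitly.
        for name in explicit_refs:
            if name in self.archived:
                context.append(self.archived[name])
        return context


memory = TieredMemory(
    active=["Goal: publish weekly newsletter"],
    recent={"scrape": "skill: fetch a page and extract text"},
    archived={"invoice": "skill: generate a PDF invoice"},
)

print(memory.build_context("scrape the pricing page"))
print(memory.build_context("write the intro"))
print(memory.build_context("bill the client", explicit_refs=("invoice",)))
```

The second call is the common case: only Tier 1 reaches the model, and everything else stays in storage.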

If you want the comparison piece, my DeepSeek V4 Ollama setup shows the same memory-layering principle applied to a local AI stack.

Architecture Choice 2 — Context Compression

Even Tier 1 context gets compressed before being sent to the model.

Generic Agent uses summarisation passes to turn long task histories into short, dense representations.

Instead of sending the full transcript of "agent did A, then B, then C, then noticed D, then..." it sends "Goal: X. Status: Y. Next: Z."

Same information.

A fraction of the tokens.

The research team behind Generic Agent calls this context information density — the idea that a smaller, denser, more focused context outperforms a massive bloated one.

In my testing, this compression alone accounts for about 60% of the token savings.

The rest comes from selective loading and skill reuse.
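
To make the "Goal / Status / Next" idea concrete, here's a rough sketch. A real summarisation pass would presumably call a model; this version uses plain rules as a stand-in, and the `compress_history` function and the event fields are hypothetical names I made up for the example.

```python
def compress_history(goal: str, events: list[dict]) -> str:
    """Fold a step-by-step history into a three-field summary.

    Rule-based stand-in for a summarisation pass (assumption): it keeps
    the step states and drops the verbose logs.
    """
    done = [e["step"] for e in events if e["state"] == "done"]
    pending = [e["step"] for e in events if e["state"] == "pending"]
    status = f"{len(done)}/{len(events)} steps done"
    if done:
        status += f", last: {done[-1]}"
    next_step = pending[0] if pending else "none"
    return f"Goal: {goal}. Status: {status}. Next: {next_step}."


events = [
    {"step": "fetch article list", "state": "done",
     "log": "GET /articles returned 42 items after two timeouts and one redirect"},
    {"step": "draft summaries", "state": "done",
     "log": "wrote 42 drafts, 3 needed a retry because of length limits"},
    {"step": "schedule posts", "state": "pending", "log": ""},
]

# What a naive agent would resend every run.
full_transcript = " ".join(f"{e['step']}: {e['log']}" for e in events)
# What the compressed version sends instead.
summary = compress_history("publish weekly digest", events)

print(summary)
print(len(summary), "characters vs", len(full_transcript), "characters")
```

On a three-step toy history the saving is small. The point is that the summary stays about the same size as the history grows, while the full transcript keeps getting longer.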

I covered the context compression idea from a different angle in my Claude code free post — same pattern, different toolchain.

Architecture Choice 3 — Selective Loading

This is the one that completes the trick.

Generic Agent doesn't blindly load skills "just in case" — it loads skills based on what the current task actually requires.

If you ask it to scrape a webpage, it loads the browser skill.

It does NOT load the email skill, the file management skill, the outreach skill, or any of the other 47 skills you might have.

Compare to a typical AutoGPT setup where the system prompt lists every available tool, every example, and every guardrail upfront.

That's why AutoGPT runs blow past 500K tokens — the prompt itself is enormous before the task even starts.

Selective loading keeps Generic Agent's prompt tight regardless of how big your skill tree gets.

You can have 200 skills installed and the active prompt stays under 5K tokens.
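
A simple way to picture selective loading is a registry where each skill declares what triggers it. The sketch below is an assumption-heavy illustration: the `SKILLS` registry, the keyword triggers, and both function names are hypothetical, and the real project may pick skills in a completely different way.

```python
# Hypothetical skill registry: name -> (trigger keywords, prompt text).
SKILLS = {
    "browser": ({"scrape", "webpage", "url"}, "Browser skill: open pages and extract text."),
    "email": ({"email", "inbox", "reply"}, "Email skill: read and send messages."),
    "files": ({"file", "folder", "rename"}, "File skill: read, write and move files."),
}


def select_skills(task: str) -> list[str]:
    """Return the names of skills whose trigger keywords appear in the task."""
    words = set(task.lower().replace(",", " ").split())
    return [name for name, (triggers, _) in SKILLS.items() if triggers & words]


def build_prompt(task: str) -> str:
    """Assemble a prompt that contains only the selected skills' text."""
    skill_text = "\n".join(SKILLS[name][1] for name in select_skills(task))
    return f"{skill_text}\nTask: {task}"


print(select_skills("Scrape this webpage for prices"))
print(build_prompt("Scrape this webpage for prices"))
```

Adding more entries to the registry grows the skill tree, but the prompt only grows when a task actually triggers them.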

What 30K Tokens Means In Real Money

Let's do the math.

Claude Sonnet 4 — roughly £2.40 per million input tokens, £12 per million output tokens.

AutoGPT-style run — 500K input + 50K output = £1.20 + £0.60 = £1.80 per run.

Generic Agent run — 25K input + 5K output = £0.06 + £0.06 = £0.12 per run.

That's 15x cheaper.

Run 100 tasks per day — AutoGPT costs you £180/day, Generic Agent costs £12/day.

Over a month — £5,400 vs £360.

Over a year: roughly £65,700 vs £4,380.

That gap buys you a car.

If you're running real automation at scale, this matters.
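
If you want to plug in your own numbers, here's the same arithmetic as a small script. The prices and token counts are the ones quoted above; the 30-day month and 365-day year are my assumptions.

```python
INPUT_PRICE_PER_M = 2.40    # £ per million input tokens (figure quoted above)
OUTPUT_PRICE_PER_M = 12.00  # £ per million output tokens (figure quoted above)


def run_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in £ of one run at the per-million-token prices above."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


autogpt = run_cost(500_000, 50_000)  # AutoGPT-style run
generic = run_cost(25_000, 5_000)    # Generic Agent run

# 100 runs per day; month = 30 days, year = 365 days (assumptions).
for label, runs in [("per run", 1), ("per day", 100),
                    ("per month", 3_000), ("per year", 36_500)]:
    print(f"{label}: £{autogpt * runs:,.2f} vs £{generic * runs:,.2f}")

print(f"ratio: {autogpt / generic:.0f}x")
```

Swap in your own model prices and token counts to see where your setup lands.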

The Hidden Win — Speed

Token efficiency isn't just about cost.

It's also about speed.

Models are slower with longer prompts.

A 500K token prompt takes 5-10 seconds just for the model to process before it generates output.

A 30K token prompt processes in under a second.

Multiply across 100 runs per day and Generic Agent saves you roughly 7 to 15 minutes of prompt processing daily, and more if each run makes several model calls.

That's the second-order benefit nobody talks about.
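
Here's the back-of-envelope version of that, using the latency figures quoted above. It assumes one model call per run and runs executed one after another; multi-step runs scale the total up accordingly.

```python
RUNS_PER_DAY = 100
LONG_PROMPT_SECONDS = (5, 10)  # range quoted above for a 500K-token prompt
SHORT_PROMPT_SECONDS = 1       # upper bound quoted above for a 30K-token prompt


def daily_minutes_saved(long_seconds: float, calls_per_run: int = 1) -> float:
    """Minutes of prompt processing saved per day (sequential runs assumed)."""
    saved_per_call = long_seconds - SHORT_PROMPT_SECONDS
    return saved_per_call * calls_per_run * RUNS_PER_DAY / 60


low, high = (daily_minutes_saved(s) for s in LONG_PROMPT_SECONDS)
print(f"{low:.1f} to {high:.1f} minutes saved per day")
```

Raise `calls_per_run` if your agent makes several model calls per task.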

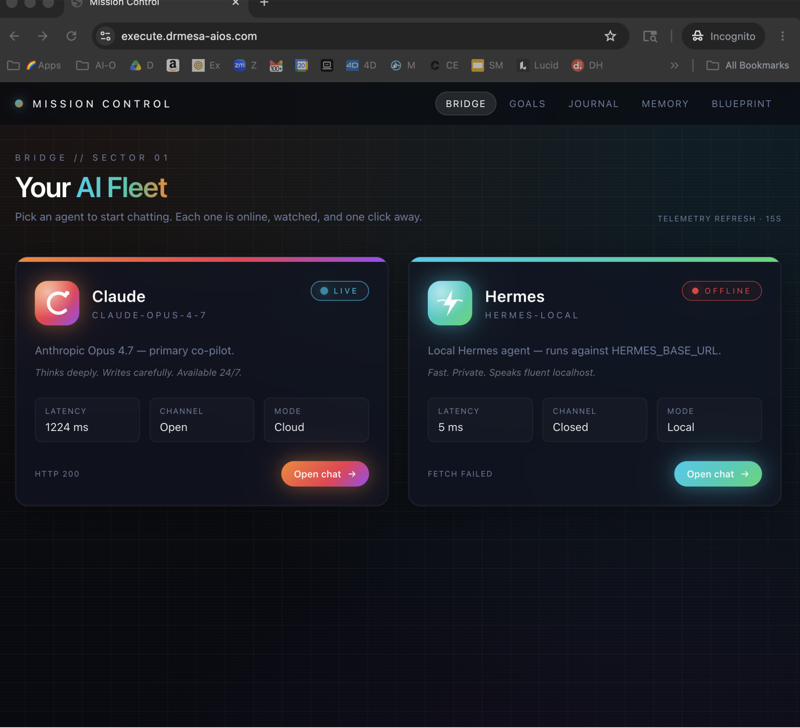

If you want the full speed-vs-cost breakdown, my Hermes Gemma 4 post covers a similar architectural shift in a different agent.

The Generic Agent 100-Line Core

Here's the part that should blow your mind.

The core agent loop in Generic Agent is about 100 lines of code.

Plus atomic tools.

That's it.

No bloated framework.

No thousands of dependencies.

A tiny minimal seed that grows into something powerful as it accumulates skills.
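
To show how little a core loop needs, here's a toy version. This is not Generic Agent's source: the `TOOLS` table, the `tool:arg` reply format, and the `scripted_model` stand-in (used in place of a real LLM call so the example runs offline) are all assumptions for illustration.

```python
from typing import Callable

# Atomic tools: small single-purpose functions (hypothetical examples).
TOOLS: dict[str, Callable[[str], str]] = {
    "upper": lambda text: text.upper(),
    "count_words": lambda text: str(len(text.split())),
}


def scripted_model(context: str) -> str:
    """Stand-in for an LLM call. Replies with 'tool:arg' or 'final:answer'."""
    if "Observation:" not in context:
        return "count_words:small core big skill tree"
    return "final:" + context.rsplit("Observation: ", 1)[1]


def agent_loop(task: str, model: Callable[[str], str], max_steps: int = 5) -> str:
    """Ask the model, run the tool it names, feed the result back, repeat."""
    context = f"Task: {task}"
    for _ in range(max_steps):
        action, _, arg = model(context).partition(":")
        if action == "final":
            return arg
        if action not in TOOLS:
            context += f"\nObservation: unknown tool {action}"
            continue
        context += f"\nObservation: {TOOLS[action](arg)}"
    return "stopped: step limit reached"


print(agent_loop("How many words are in the phrase?", scripted_model))
```

Everything else, including new tools and skills, plugs into that loop without changing it.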

Compare to LangChain — tens of thousands of lines, dozens of dependencies, complex abstractions.

Or AutoGPT, a substantial framework with lots of moving parts.

Generic Agent went the other direction — small core, big skill tree.

The whole industry has been racing toward bigger and more complex.

Generic Agent went smaller, leaner, smarter.

That's a cultural shift, not just a technical one.

What This Means For The Industry

If Generic Agent's architecture wins, three things happen.

One: agent costs collapse. Running an AI agent stops feeling like running a luxury car. It becomes the price of a cup of coffee per month.

Two: agents become accessible. Cost was the barrier for most businesses. Drop the cost 15x and you unlock a much bigger market.

Three: the engineering bias flips. Instead of "load everything just in case", the bias becomes "load only what's needed". That changes how every agent is built going forward.

Generic Agent isn't the only project pushing this direction.

Hermes Agent leans the same way.

DeepSeek's agent stack leans the same way.

But Generic Agent is the cleanest example I've seen of what context information density looks like in production.

I went deeper on the Hermes side in my Hermes vs OpenClaw breakdown — different agents, same architectural shift.

Generic Agent Token Efficiency FAQ

Does the 30K token claim include skill content?

The 30K is active context per run. The skill tree itself is much larger but most of it stays in cold storage until selectively loaded.

Will my token bill really drop 15x?

For equivalent workloads, yes — based on my testing and the published architecture. Your mileage may vary depending on task complexity.

Does context compression hurt task quality?

In my testing, no — denser context often outperforms sparse context because the model isn't distracted by irrelevant detail.

Can I tune the token budget?

Yes — selective loading thresholds and compression aggressiveness are configurable.

Does this work with smaller models?

Yes — and arguably even better, because smaller models benefit more from focused context.

What's the failure mode of selective loading?

If a task needs a skill the agent didn't load, it has to detect that, load it, and retry. Adds latency. Rare in practice but it happens.
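
That detect, load, retry cycle looks roughly like this. It's a sketch under my own assumptions: the `MissingSkill` exception, the `INSTALLED` set, and both function names are hypothetical, and the real agent may detect a missing skill differently.

```python
class MissingSkill(Exception):
    """Raised when a task needs a skill that is not in the active context."""

    def __init__(self, name: str):
        super().__init__(name)
        self.name = name


INSTALLED = {"browser", "email", "files"}  # hypothetical installed skill tree


def run_task(required: set[str], loaded: set[str]) -> str:
    """Pretend to run a task; fail on the first skill that is not loaded."""
    for name in sorted(required):
        if name not in loaded:
            raise MissingSkill(name)
    return "ok"


def run_with_retry(required: set[str], loaded: set[str], max_retries: int = 3):
    """Run the task, loading any missing skill and retrying. Returns (result, attempts)."""
    loaded = set(loaded)  # copy so the caller's set is not modified
    attempts = 0
    while True:
        attempts += 1
        try:
            return run_task(required, loaded), attempts
        except MissingSkill as err:
            # Give up if the skill is not installed or retries are used up.
            if err.name not in INSTALLED or attempts > max_retries:
                raise
            loaded.add(err.name)


print(run_with_retry({"browser", "email"}, {"browser"}))
```

Each retry is an extra attempt, which is where the added latency comes from.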

Related Reading

- DeepSeek V4 Ollama — local low-cost AI stack

- Claude Code free — context compression in another tool

- Hermes vs OpenClaw — architecture comparison

Final Take

Generic Agent's token efficiency isn't a marketing number.

It's an architectural choice that compounds into AI automation that is roughly 15x cheaper, processes prompts up to 10x faster, and scales without the prompt growing alongside your skill tree.

If you're running AI agents at scale, the cost difference is real money.

If you're thinking about running AI agents, this is why the entry barrier is dropping.

Either way, watch this space.

Generic Agent's 30K-token architecture is the unfair advantage, so switch your stack across before everyone else catches up.