The best chapter of the OpenClaw course?

The one that shows you how to run it completely free.

No API keys.

No monthly bills.

Just Ollama and Qwen 3.5 on your own machine.

Get the free local setup notes 👉

Why Run OpenClaw Locally

OpenClaw is already self-hosted.

Data stays on your machine.

But the models normally sit behind a remote API.

Which means:

- You pay per token

- Your prompts leave your machine

- You're rate-limited by the provider

Ollama + Qwen 3.5 removes all three.

Model on your laptop.

Prompts never leave.

Run as many automations as your hardware handles.

Zero cost.

The Exact OpenClaw Course Setup

Step one: install Ollama.

Go to ollama.com.

Download the installer for your OS.

Install.

Step two: pull Qwen 3.5.

Open a terminal.

ollama run qwen3.5

On first run, this downloads the default 9B model, then drops you into a chat prompt. Type /bye to exit.

Takes a few minutes depending on your connection.
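
Want to confirm the download landed before moving on? Here's a minimal Python sketch. It assumes Ollama is serving on its default local address (http://localhost:11434) and reads its /api/tags endpoint, which lists installed models. The helper names are mine, not from the course.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address.
OLLAMA_URL = "http://localhost:11434"


def installed_models(tags_payload: dict) -> list[str]:
    """Extract model names from the JSON returned by GET /api/tags."""
    return [m["name"] for m in tags_payload.get("models", [])]


def has_model(tags_payload: dict, prefix: str) -> bool:
    """True if any installed model name starts with the given prefix."""
    return any(name.startswith(prefix) for name in installed_models(tags_payload))


def fetch_tags(base_url: str = OLLAMA_URL) -> dict:
    """Ask the local Ollama server which models it has downloaded."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return json.load(resp)
```

Call has_model(fetch_tags(), "qwen3.5") once Ollama is running. The exact tag Ollama reports may carry a size suffix, which is why the check matches on a prefix.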

Step three: connect OpenClaw.

ollama launch openclaw

Pick the model from the dropdown.

Done.

OpenClaw is now running free on your machine.

Which Model Size to Pick

The course recommends starting with 9B.

Fast.

Good enough for most skills.

Runs on an M1/M2 Mac without breaking a sweat.

For heavier tool-calling, use a bigger model.

Larger models are better at running tools.

If your machine has the RAM, try the 14B or 32B variants.

You'll feel the difference when agents chain skills together.

What Runs Well on Local Models

From my testing across the OpenClaw course automations:

Great on Qwen 3.5 9B:

- SEO monitoring

- App health checks

- Reddit digests

- Calendar/email triage

Needs bigger models:

- Code generation from a PRD

- Full marketing squad outputs

- BMAD framework stories

Match the model to the task.

That's the 10k-ft lesson.

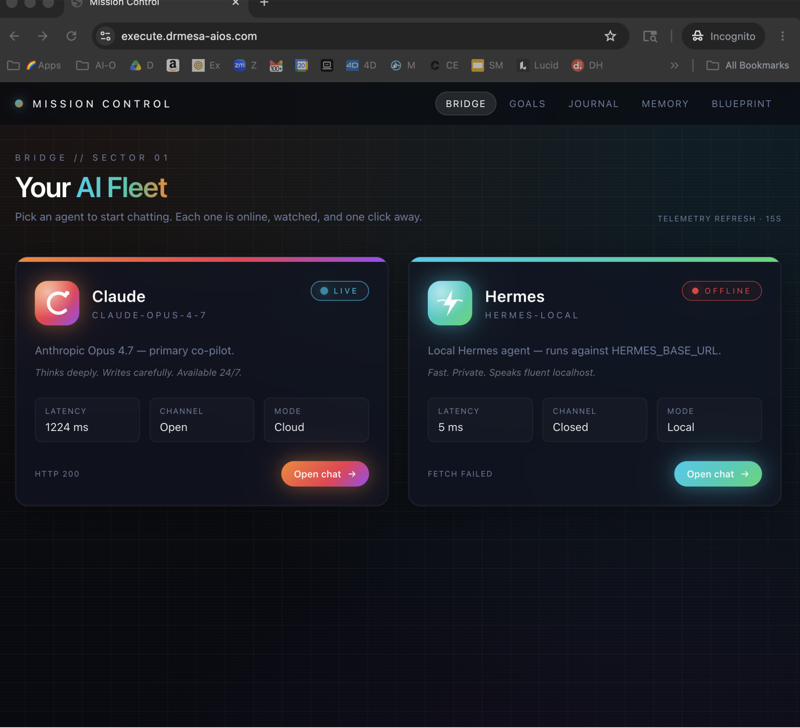

Combining Local and Remote

Here's the trick nobody talks about.

You don't have to go 100% local.

OpenClaw lets you route specific agents to specific models.

Route your cheap/frequent agents to local Qwen 3.5.

Route your one-off heavy lifts to Claude 4.6 or Gemini 3.1.

You get the best of both.

Cost can drop 80-90%, depending on how much of your workload is the cheap, frequent kind.

Quality stays high.

That's the real game.
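
To make the routing idea concrete, here's a rough Python sketch. It is not OpenClaw's config format. The agent names and the remote model placeholder are hypothetical. It only shows the shape of the decision: a lookup table with a cheap local default.

```python
# Illustrative only: this is NOT OpenClaw's config format.
LOCAL_MODEL = "qwen3.5"        # the local tag pulled during setup
REMOTE_MODEL = "remote-model"  # placeholder for whichever hosted model you pay for

# Hypothetical agent names, grouped the way the task lists above suggest.
ROUTES = {
    "seo-monitor": LOCAL_MODEL,
    "health-check": LOCAL_MODEL,
    "reddit-digest": LOCAL_MODEL,
    "inbox-triage": LOCAL_MODEL,
    "prd-codegen": REMOTE_MODEL,
    "marketing-squad": REMOTE_MODEL,
}


def model_for(agent: str, routes: dict[str, str] = ROUTES) -> str:
    """Return the model for an agent, defaulting to the free local one."""
    return routes.get(agent, LOCAL_MODEL)
```

Defaulting to local means a new agent costs nothing until you decide it has earned a remote model.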

Hardware Reality Check

Let's be honest.

Local models need hardware.

Minimum for Qwen 3.5 9B:

- 16GB RAM

- Apple Silicon or modern NVIDIA GPU

- 20GB disk

Better setup:

- 32GB RAM

- M2 Pro/Max or RTX 4070+

- 100GB disk for multiple models

If you're on an ageing laptop, stick to remote APIs.

If you've got decent kit, local wins.
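
Not sure where your machine sits? A back-of-envelope estimate helps. The sketch below assumes roughly 4 bits per weight, a common quantization level for local models, plus a guessed 6GB of headroom for the OS, context and other apps. Both numbers are my assumptions, and real model files run somewhat larger.

```python
def weights_gb(params_billion: float, bits_per_weight: float = 4.0) -> float:
    """Approximate size of the model weights in GB.

    One billion parameters at 8 bits each is about 1 GB,
    so the size scales with bits_per_weight / 8.
    """
    return params_billion * bits_per_weight / 8


def fits_in_ram(params_billion: float, ram_gb: float,
                bits_per_weight: float = 4.0, headroom_gb: float = 6.0) -> bool:
    """Rough check: weights plus assumed headroom must fit in RAM."""
    return weights_gb(params_billion, bits_per_weight) + headroom_gb <= ram_gb


if __name__ == "__main__":
    for size in (9, 14, 32):
        print(f"{size}B: ~{weights_gb(size):.1f} GB of weights, "
              f"fits in 16GB: {fits_in_ram(size, 16)}, "
              f"fits in 32GB: {fits_in_ram(size, 32)}")
```

Treat the result as a floor, not a guarantee. Long contexts and several loaded models push real usage higher.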

Privacy Wins Nobody Mentions

Your prompts never leave the machine.

Your skill configs stay private.

Your memory browser contents stay private.

For anyone handling client data — this is huge.

The OpenClaw course covers this in the security module but it's worth flagging here.

Combined with external secrets management, thread-bound agents and the SSRF guard, local Qwen 3.5 is about as private as AI gets.

Troubleshooting From The OpenClaw Course

If OpenClaw can't find Ollama: make sure Ollama is running in the background.

If the model feels slow: close other apps, upgrade RAM, or drop to a smaller model.

If tool-calling fails: switch to a larger model. 9B struggles with complex chains.

If the gateway breaks after an update: run openclaw doctor fix, or paste the restart gateway command into your terminal.

Simple fixes.

No drama.
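
For the first fix, you can check whether Ollama is actually up before blaming OpenClaw. A small Python sketch, assuming Ollama's default local address (http://localhost:11434). The function name is mine.

```python
import urllib.error
import urllib.request


def ollama_is_up(base_url: str = "http://localhost:11434",
                 timeout: float = 2.0) -> bool:
    """Return True if something answers HTTP 200 at the Ollama address."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or DNS failure: treat as "not running".
        return False
```

If this returns False, start Ollama and try again before touching any OpenClaw settings.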

Why The OpenClaw Course Changes The Economics

Most AI courses teach you to rent intelligence.

The OpenClaw course teaches you to own it.

Rent means £100-500/month in API fees once you stack automations.

Own means one-time hardware cost, then unlimited runs.

Over 12 months, local pays for the laptop.

That's the ROI nobody shows you.
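
The break-even maths is simple enough to check yourself. The sketch below uses the £100-500/month range from above. The £2,000 hardware price is a made-up figure for illustration, and electricity is ignored.

```python
def months_to_break_even(hardware_cost: float, monthly_api_fees: float) -> float:
    """Months of avoided API fees needed to cover a one-time hardware cost."""
    if monthly_api_fees <= 0:
        raise ValueError("monthly_api_fees must be positive")
    return hardware_cost / monthly_api_fees


if __name__ == "__main__":
    hardware = 2000  # hypothetical laptop price in GBP
    for fees in (100, 500):
        months = months_to_break_even(hardware, fees)
        print(f"£{fees}/month in API fees: break even after {months:.0f} months")
```

The heavier your API bill, the faster local pays back. Light users at the bottom of that range will wait longer than a year on pricier hardware.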

Join AI Profit Lab for my full cost breakdown 👉

FAQ

1. Is Qwen 3.5 actually free? Yes. It's an open-weights model. Download it and run it as much as you want.

2. How does Qwen 3.5 compare to Claude? Claude is smarter on complex reasoning. Qwen 3.5 is surprisingly strong on tool use and costs nothing per token. For 80% of OpenClaw tasks it's good enough.

3. Do I need a GPU? Apple Silicon is fine. Intel Mac with discrete GPU works. CPU-only is slow but possible.

4. Can I run multiple models at once? Yes, with enough RAM. Ollama handles model switching automatically.

5. Does local run all the OpenClaw course automations? Yes — some just run better on bigger models. Route tasks accordingly.

6. What if my laptop isn't powerful enough? Start with free-tier API models like Gemini Flash or run a small local model for cheap agents while using remote for heavy lifts.